Criteria for evaluating AI agents – beyond the chatbot hype

According to MIT NANDA 2025, 95% of GenAI pilots fail to achieve rapid revenue acceleration. One of the main reasons is a learning gap: AI tools are introduced, but they fail to adapt to existing workflows and operational realities.

Part of the problem lies in how teams approach evaluating AI agents.

Too often, the process looks like this: watch a demo → check a feature list → run a basic Q&A accuracy test → sign the deal.

Six months later, the pilot is quietly shelved, and leadership is back to asking the same question: where is the ROI?

The issue is not just the technology. It’s the evaluation method.

This guide introduces a practical rubric for evaluating AI agents, written for Sales, Customer Support, and RevOps teams and for the CIOs and CTOs responsible for deploying them.

It’s built around one core idea: an AI agent should be interviewed like a senior AE or CSM – not benchmarked like a chatbot widget.

TL;DR

- Most "AI agents" are still glorified search bars – they retrieve documents, draft summaries, and stop there. Real agents must deliver clear answers and actions, not just search results.

- Five criteria separate a real Gen-3 agent from a chatbot with better marketing:

  - Architecture

  - Agency

  - Governance

  - Orchestration

  - Transparency

- Use the scorecard and 15-question interview guide at the end to evaluate any vendor or in-house build.

Five criteria for evaluating AI agents – beyond chatbot benchmarks

Most guides obsess over accuracy benchmarks, latency, and token cost.

AI agents that carry your pipeline, renewals, and support backlog live or die on five deeper criteria.

- Architecture – knowledge graph or hallucination machine? Test: Can the agent trace a service owner through a live incident – and show its reasoning path?

- Agency – system of action or expensive search bar? Test: Can it close a Jira ticket or process a refund end-to-end, without a human touching it?

- Governance – permission-aware or prompt-injectable? Test: Are permissions enforced at the data-node level (RBAC/ABAC), not just via prompt filters?

- Orchestration – specialist swarm or confused "god bot"? Test: Can one agent hand off to another – say, Support to Sales – with full shared context?

- Transparency – white-box reasoning or black-box guessing? Test: When an agent denies a refund or flags a renewal as at risk, can it show the full audit trail and the reasoning behind the decision?

Why 95% of AI pilots fail – and how front-office teams avoid being one of them

The data tells a clear story. MIT NANDA 2025 analysed 150 interviews, 350 surveys, and 300 deployments: 95% of GenAI pilots fail to deliver P&L impact. Gartner projects 40%+ of agentic AI projects will be cancelled or stalled in the near term. In 2025 alone, 42% of enterprise AI pilots were abandoned – up from 17% the year before.

Three root causes show up in every failed pilot:

- Wrong expectations. Teams buy chatbot-style tools and expect agent-level outcomes. A "smart search bar" won't fix renewal risk, ticket backlog, or pipeline clarity – no matter how good the demo looks.

- Wrong evaluation criteria. Over-indexed on answer fluency and interface polish. Under-indexed on architecture, agency, and governance – the things that determine whether an agent can actually finish work.

- Risky DIY builds. RAG stacks with no RBAC, no observability, and no concept of accounts or opportunities. MIT found vendor platforms succeed 67% of the time vs only 33% for internal builds – but only when buyers evaluate the right things.

That's the whole point of this guide: helping buyers test for the right things.

Think of it this way. You wouldn't hire a senior AE just because they interview well. You'd look at how they handle a renewal under pressure, an escalation with no context, or a complex multi-stakeholder deal.

AI agents are no different. The interview framework below gives you exactly those tests.

Recommended read: What it takes to build enterprise-grade AI agents

1) Architecture: unified customer memory vs hallucination machine (the brain test)

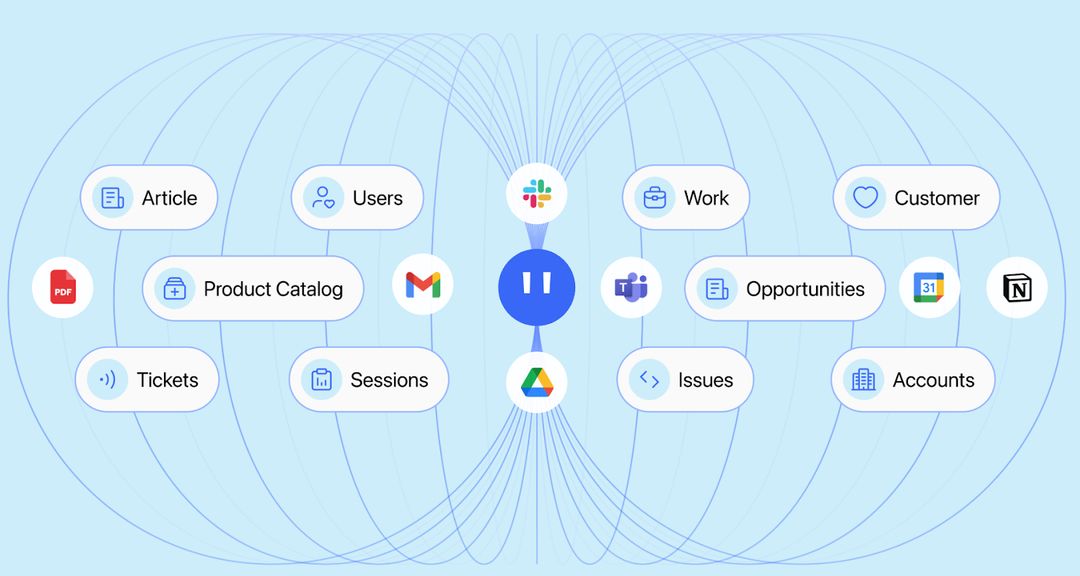

Architecture is how your agent stores and reasons over truth. For revenue and support teams, that means a living customer memory – accounts, tickets, conversations, product usage – not just a pile of vector-indexed documents.

Most AI agents sit on basic RAG: vector search over unstructured files with no entity model for accounts, opportunities, or tickets.

The risks are real: hallucinated escalation owners, answers based on stale playbooks, wrong contacts routed to wrong teams, and decisions made off outdated PDFs that haven't been touched in six months.

What good looks like:

- Nodes: accounts, contacts, opportunities, tickets, services.

- Edges: relationships that let the agent reason, not just retrieve. This ticket → this feature → this account → this renewal.

- Enables questions like "What changed for this customer in the last 30 days?" or "Which renewals with open P1 tickets are most at risk this quarter?"
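
To make the nodes-and-edges idea concrete, here is a minimal sketch of an entity graph answering the "renewals with open P1 tickets" question. Everything in it is an illustrative assumption: the `CustomerGraph` class, the `has_ticket` and `has_renewal` relation names, and the sample accounts are hypothetical, and this is not how any particular vendor stores customer memory.

```python
from dataclasses import dataclass, field


@dataclass
class CustomerGraph:
    """Tiny in-memory entity graph: typed nodes plus labelled edges (hypothetical)."""
    nodes: dict = field(default_factory=dict)  # node_id -> {"type": ..., other attrs}
    edges: list = field(default_factory=list)  # (source_id, relation, target_id)

    def add_node(self, node_id, node_type, **attrs):
        self.nodes[node_id] = {"type": node_type, **attrs}

    def add_edge(self, source_id, relation, target_id):
        self.edges.append((source_id, relation, target_id))

    def neighbours(self, source_id, relation):
        return [t for s, r, t in self.edges if s == source_id and r == relation]


def renewals_at_risk(graph):
    """Return (account, renewal, evidence) for renewals whose account has an open P1."""
    results = []
    for node_id, node in graph.nodes.items():
        if node["type"] != "account":
            continue
        open_p1 = [
            t for t in graph.neighbours(node_id, "has_ticket")
            if graph.nodes[t]["priority"] == "P1" and graph.nodes[t]["status"] == "open"
        ]
        if not open_p1:
            continue
        for renewal in graph.neighbours(node_id, "has_renewal"):
            # The ticket list doubles as the reasoning path a reviewer can inspect.
            results.append((node_id, renewal, open_p1))
    return results


graph = CustomerGraph()
graph.add_node("acme", "account")
graph.add_node("globex", "account")
graph.add_node("T-1", "ticket", priority="P1", status="open")
graph.add_node("T-2", "ticket", priority="P3", status="open")
graph.add_node("R-acme", "renewal", quarter="Q3")
graph.add_node("R-globex", "renewal", quarter="Q3")
graph.add_edge("acme", "has_ticket", "T-1")
graph.add_edge("globex", "has_ticket", "T-2")
graph.add_edge("acme", "has_renewal", "R-acme")
graph.add_edge("globex", "has_renewal", "R-globex")

print(renewals_at_risk(graph))  # [('acme', 'R-acme', ['T-1'])]
```

The answer comes back with the tickets that justify it, which is the difference between traversing relationships and summarising whichever document happened to match a vector search.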

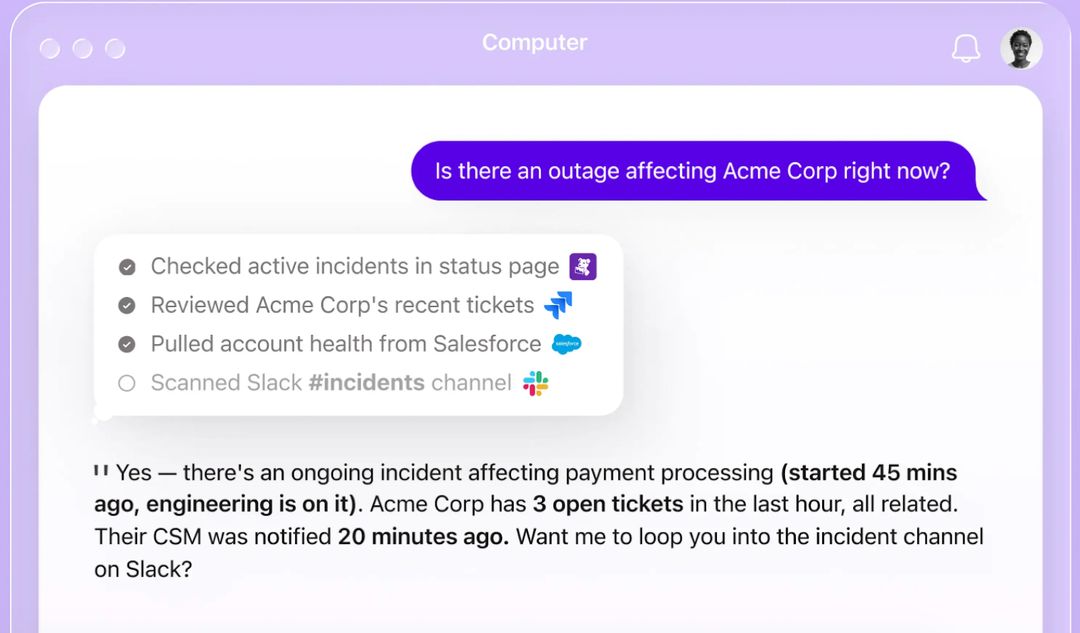

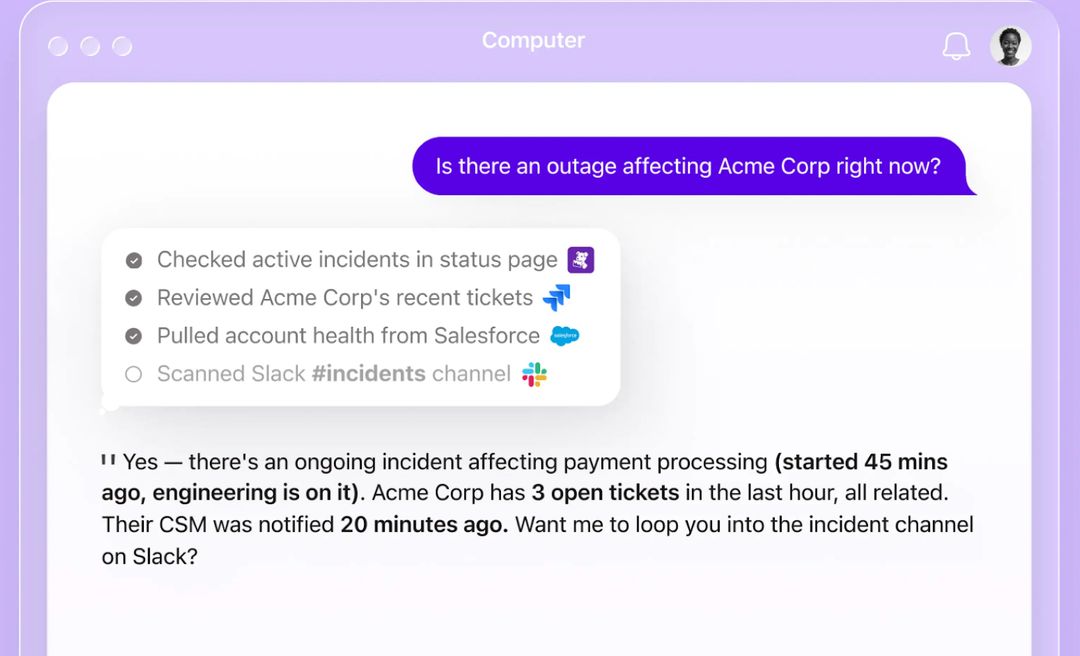

The agent interview test: "Prep me for the Acme QBR. Show me recent issues, usage trends, and sentiment – and explain how you got that view."

A real agent traverses the graph and shows its work. A basic RAG tool summarises a PDF and hopes for the best.

How Computer, by DevRev, approaches this: Computer Memory is a permission-aware knowledge graph of your customers, built continuously across CRM, support tools, communications, and product data. It is a unified data layer that connects structured and unstructured data.

It's why Computer can answer account-level questions with evidence – not because it's searching harder, but because it actually knows your customer.

2) Agency: system of action or expensive search bar? (the hands test)

Agency is an agent's ability to not just find information, but to take governed actions across your systems on behalf of your team.

Many "agents" still stop at drafts. They generate an email, suggest a next step, or "recommend" a refund. Then a human clicks through three systems to make it happen. These tools look impressive in demos, but they don't move real KPIs.

Real agency across front-office workflows looks like this:

- Support: validate policy, process an SLA credit, update ticket status, and notify the CSM – no manual steps.

- CS: log QBR outcomes, update renewal date and risk level, create follow-up tasks.

- Sales: update opportunity stage, close date, forecast category, and next steps after a call ends.

What good looks like:

- Two-way sync into CRM and support tools – read and write.

- Full CRUD access with clear policies and approval flows where needed.

- Actions logged, reversible, and explainable.
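
One way to picture "approval flows where needed" and "logged, reversible" together is the sketch below. It is a simplified illustration under stated assumptions: the `ActionRunner` class, the 500 threshold, and the ticket fields are hypothetical, and a real system would write to a CRM or ticketing API rather than a dictionary.

```python
from dataclasses import dataclass, field


@dataclass
class ActionRunner:
    approval_threshold: float   # credits above this need a human approver (assumed policy)
    ticket: dict                # stand-in for a record in a ticketing system
    log: list = field(default_factory=list)

    def apply_sla_credit(self, amount, approved_by=None):
        if amount > self.approval_threshold and approved_by is None:
            self.log.append({"action": "apply_sla_credit", "amount": amount,
                             "result": "pending_approval"})
            return "pending_approval"
        previous = dict(self.ticket)  # snapshot so the action can be undone
        self.ticket["credit"] = self.ticket.get("credit", 0) + amount
        self.ticket["status"] = "resolved"
        self.log.append({"action": "apply_sla_credit", "amount": amount,
                         "approved_by": approved_by, "previous": previous,
                         "result": "applied"})
        return "applied"

    def undo_last(self):
        """Revert the most recent applied action using its logged snapshot."""
        for entry in reversed(self.log):
            if entry["result"] == "applied":
                self.ticket.clear()
                self.ticket.update(entry["previous"])
                entry["result"] = "reverted"
                return True
        return False


runner = ActionRunner(approval_threshold=500, ticket={"id": "T-1", "status": "open"})
first = runner.apply_sla_credit(200)   # under the threshold, applied directly
second = runner.apply_sla_credit(900)  # over the threshold, waits for a human
runner.undo_last()                     # reverts the applied credit
print(first, second, runner.ticket)
```

The design point is that the write, the approval check, and the log entry happen in the same code path, so an action cannot be applied without leaving a record that explains it and allows it to be undone.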

The agent interview test: "Show me an end-to-end flow: a customer requests an SLA credit, the agent validates the policy, applies the credit, updates the ticket and CRM, and notifies the AE. No manual clicks."

If the demo stops at "here's a draft email," you're looking at a search bar with better branding.

How Computer approaches this: Computer is a system of action for Sales, CS, and Support teams. Through Computer AirSync – a two-way sync engine – it can update CRMs, close support tickets, draft emails, send follow-ups, and log outcomes, with human-in-the-loop controls so your team stays in charge.

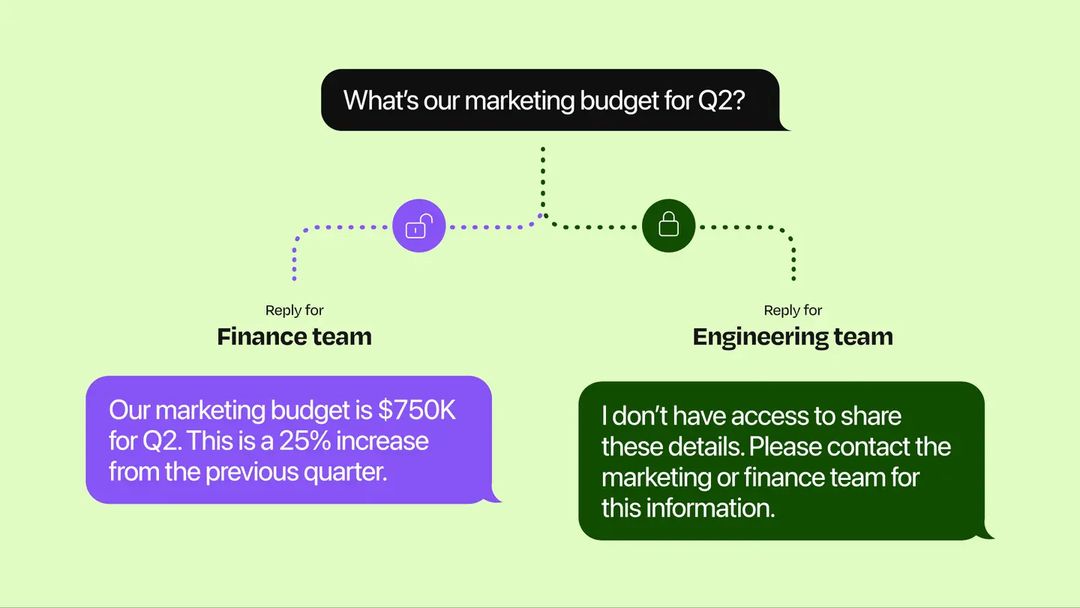

3) Governance: permission-aware or prompt-injectable? (the guardrails test)

Governance is how an agent respects who can see, change, and approve customer data, down to individual records and fields.

Prompt filters and "don't say X" instructions are not governance. They're suggestions. A determined user – or a misdirected agent – can bypass them easily. What you actually need is permission enforcement that mirrors your existing systems of record: row-level, field-level, and role-level.

The risks of skipping this are concrete:

- Renewal terms or exec pricing accidentally surfaced in a "helpful" answer.

- A junior rep accessing contract details they have no business seeing.

- Audit logs that can't explain why sensitive data was returned.

What good looks like:

- Real-time sync with your identity and access layer (Okta, Azure AD, CRM roles).

- Row- and field-level controls that follow the user's existing permissions.

- Clear separation: what agents can read vs write, with approval gates where warranted.
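
The difference between a prompt filter and data-level enforcement can be shown in a few lines. This is a minimal sketch, assuming a hypothetical `ROLE_FIELD_ACCESS` mapping and made-up role and field names; in practice the mapping would be synced from your identity provider and systems of record rather than hard-coded.

```python
# Hypothetical role -> readable fields mapping (illustrative only).
ROLE_FIELD_ACCESS = {
    "junior_csm": {"name", "health_score"},
    "sales_director": {"name", "health_score", "exec_pricing"},
}


def read_fields(record, role, requested, audit_log):
    """Return only the fields this role may read; log anything that was denied."""
    allowed = ROLE_FIELD_ACCESS.get(role, set())
    granted = {f: record[f] for f in requested if f in allowed and f in record}
    denied = [f for f in requested if f not in allowed]
    if denied:
        audit_log.append({"role": role, "denied": denied})
    return granted


audit_log = []
account = {"name": "Acme", "health_score": 72, "exec_pricing": "40% discount"}

visible = read_fields(account, "junior_csm", ["name", "exec_pricing"], audit_log)
print(visible)    # {'name': 'Acme'}
print(audit_log)  # [{'role': 'junior_csm', 'denied': ['exec_pricing']}]
```

Because the filtering happens before any data reaches the language model, rephrasing the question changes nothing: the model never receives the restricted field, and the denied request still appears in the audit log.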

The agent interview test: "As a junior CSM, ask the agent for executive pricing or comp data. What happens?"

A permission-aware agent refuses and explains why. The audit log shows a blocked attempt. A prompt-filter system might surface the data anyway if the question is rephrased.

How Computer approaches this: Computer inherits and enforces permissions from your systems of record. This is knowledge graph governance at the data layer – not prompt-level rules. Every response is scoped to what that user is actually allowed to see – no exceptions, no prompt overrides.

4) Orchestration: specialist swarm vs one bot to rule them all (the team test)

Orchestration is how multiple agents collaborate like a team – each owning a slice of work, sharing context, and handing off cleanly.

A single "god bot" bolted onto everything becomes brittle fast. It tries to be a support agent, a sales assistant, a data analyst, and a compliance tool all at once. The result is shallow in every direction and impossible to debug when something goes wrong.

Real revenue workflows cross team boundaries:

- Support detects a pattern of P1 tickets that signals churn risk.

- That risk needs to surface to CS and Sales with full account context.

- Sales needs to know the history, sentiment, open issues, and a suggested next step – not just "FYI: customer is unhappy."

What good looks like:

- Dedicated agents for support triage, escalation analysis, account health, renewal risk, and sales follow-up.

- Shared customer memory so context follows the customer, not the channel.

- Clean hand-off protocols and state tracking across the workflow.
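
A hand-off protocol with state tracking can be as simple as a structured payload that the receiving agent refuses to accept when context is missing. The sketch below is an assumption-laden illustration: the `Handoff` fields, the `REQUIRED_CONTEXT` tuple, and the state names are hypothetical, chosen to mirror the history, sentiment, open issues, and next step described above.

```python
from dataclasses import dataclass


@dataclass
class Handoff:
    account_id: str
    from_agent: str
    to_agent: str
    signal: str
    open_issues: list
    sentiment: str
    suggested_next_step: str
    state: str = "created"  # assumed lifecycle: created -> accepted


REQUIRED_CONTEXT = ("open_issues", "sentiment", "suggested_next_step")


def accept(handoff):
    """Accept a hand-off only if it carries the context the next agent needs."""
    missing = [name for name in REQUIRED_CONTEXT if not getattr(handoff, name)]
    if missing:
        raise ValueError(f"hand-off missing context: {missing}")
    handoff.state = "accepted"
    return handoff


complete = Handoff(
    account_id="acme",
    from_agent="support-triage",
    to_agent="sales-follow-up",
    signal="churn_risk",
    open_issues=["T-1", "T-7"],
    sentiment="negative",
    suggested_next_step="Schedule an exec check-in before the renewal call",
)
print(accept(complete).state)  # accepted
```

A bare "FYI: customer is unhappy" message would fail this check, which is the behaviour you want: the workflow surfaces the gap instead of silently passing a stripped-down alert to Sales.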

The agent interview test: "When support detects an upsell or renewal risk, show me exactly how that context moves to sales. What does the AE actually see?"

If the answer is a Slack message with no structure, or nothing at all, that's not orchestration.

How Computer approaches this: Computer for Customer Support teams and Computer for Sales share the same customer Memory. When a support signal becomes a revenue signal, the AE receives the full account picture – history, sentiment, product usage, and recommended next steps – not a stripped-down alert.

5) Transparency: white-box reasoning or black-box guessing? (the audit trail test)

Transparency is your ability to see what an agent did, why it did it, and which data or policies it relied on.

Black-box decisions are a trust problem before they're a compliance problem. If your team can't see why an agent flagged a renewal as safe, or denied a refund, or escalated a ticket, they won't trust it. And if they don't trust it, they won't use it. That's the adoption crater most AI pilots fall into.

What good looks like:

- Per-action logs: inputs, tools called, outputs, user or agent identity, and approvals.

- Human-readable reasoning summaries, not just system traces.

- Exportable views for internal audit and external compliance (EU AI Act, SOC 2, etc.).
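
As a rough sketch of what a per-action log entry might contain, the example below records the items listed above and exports them as JSON Lines. The `ActionRecord` field names, the agent and tool identifiers, and the sample values are all hypothetical; the export format your compliance team needs may well differ.

```python
import json
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass
class ActionRecord:
    actor: str                  # user or agent identity
    action: str
    inputs: dict
    tools_called: list
    output: dict
    approved_by: Optional[str]  # None when no approval was required
    reasoning: str              # human-readable summary, not a raw system trace


def export_jsonl(records):
    """Serialise records as JSON Lines, one action per line."""
    return "\n".join(json.dumps(asdict(r), sort_keys=True) for r in records)


records = [
    ActionRecord(
        actor="agent:renewal-risk",
        action="update_risk",
        inputs={"account": "acme", "from": "high", "to": "medium"},
        tools_called=["crm.read", "tickets.read", "crm.write"],
        output={"risk": "medium"},
        approved_by="csm:jordan",
        reasoning="Both open P1 tickets were resolved and weekly usage recovered.",
    )
]
exported = export_jsonl(records)
print(exported)
```

Keeping the plain-language `reasoning` field next to the machine-readable inputs and tool calls lets the same record serve both the account team and an auditor.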

The agent interview test: "The agent downgraded a renewal risk from 'high' to 'medium' last week. Show me exactly why."

A transparent agent shows the data it used, the signals it weighed, and the reasoning path. A black-box agent says "I updated the risk score" and offers nothing further.

How Computer approaches this: Every Computer action surfaces a reasoning chain. You can see which sources were used, which permissions were checked, and what logic produced the answer – so your team can act with confidence, and your CIO can sleep at night.

The AI agent scorecard for your revenue and support org

Use this table when comparing vendors or evaluating a DIY build. Score each option against where it actually sits – not where the sales deck says it sits.

Gen-3 is where an AI agent stops being a tool and starts working like a teammate – one that knows your customers, acts in your systems, and earns your team's trust over time.

The agent interview guide – 15 questions to ask every vendor

Use these in your next vendor call or PoC. They're designed to surface real capability, not demo theatre.

Architecture (the brain test)

1. Show me how your agent answers "What changed in this account in the last 30 days?" – and explain how it got there.

2. Do you use a knowledge graph or an entity model? How is it built and kept current?

3. How do you reduce hallucinations beyond prompt engineering?

Agency (the hands test)

4. Which systems can your agent write back to today, without human clicks?

5. Show me a complete refund or SLA credit workflow, end-to-end, no manual steps.

6. What guardrails prevent unsafe or unauthorised actions?

Governance (the guardrails test)

7. How are permissions enforced – at the prompt level or at the data level?

8. If I show you our Salesforce roles, can you guarantee the agent respects them at field level?

9. What does your audit log show when a permission violation is attempted?

Orchestration (the team test)

10. How does context move between a support agent and a sales agent in your system?

11. What does the hand-off package look like when a support ticket becomes a sales opportunity?

12. Can agents from different workflows share a unified view of the same customer?

Transparency (the audit trail test)

13. Show me the full reasoning trace for a decision your agent made last week.

14. How do you surface agent reasoning to end users in plain language?

15. Can you export audit logs in a format our compliance team can use?

You can use this same list to test Computer against your real renewals, tickets, and pipeline – with your own data, not a canned demo.

Stop evaluating how well it chats – start evaluating how much work it finishes

Most AI evaluations today still ask the wrong question: "How good is the answer?"

For revenue and support teams, the real question is: “How much work does it actually finish – safely, accurately, and with my team’s trust?”

The gap between those two questions is where most AI budgets disappear.

An AI agent shouldn’t be tested like a chatbot. It should be evaluated like a teammate. You’d want to see how it handles a renewal under pressure, how it escalates a P1 without losing context, and whether it updates the CRM after a difficult call without being asked.

In other words, the best AI agent isn’t the one that talks the best. It's the one that quietly closes the most loops – on renewals, on tickets, on customer follow-ups that would otherwise fall through the cracks.

Use the scorecard and interview framework above in your next PoC. Bring your hardest questions. Test the agent against your real accounts, real pipeline, and real escalations.

Put Computer, by DevRev, to the test. Book a session where you interview Computer's capabilities on your terms. Bring your questions. We'll bring the answers.
