Best AI agents for customer support: the enterprise buyer’s guide for 2026


Neelabja Adkuloo

Member of marketing staff

Your customer gets double-charged. Your support agent opens the AI assistant, which drafts a flawless apology, but can't issue the refund, update the plan, or log a case for finance.

The agent does it all manually, the customer waits, and a tool that looked impressive in the demo turns out to be a nicer search box.

This is why finding the best AI agents for customer support is harder than it looks.

A 2025 Gartner survey reports 77% of support leaders are increasing AI spend, but a large share still report mixed results on resolution rates and customer satisfaction. This guide gives you a hard-nosed way to tell whether you’re buying a slightly smarter FAQ bot or a real enterprise AI support platform that can close tickets and cut handle time.

What makes the best AI agent for customer support?

- Architecture: Uses a knowledge graph, not just RAG, to reason across relationships.

- Agency: Reads, writes, and interacts with different tools across your tech stack.

- Integration: Connects support, engineering, and product so customer‑reported bugs get fixed.

- Governance: Enforces role-based data access at the platform layer, not via prompts.

- Intelligence: Tracks resolution and CSAT, not only deflection rates.

- Orchestration: Coordinates specialist agents with shared context instead of one generic bot.

- Transparency: Gives full audit trails for SOC 2 and EU AI Act compliance.

3 generations of customer support AI: chatbots, copilots, and enterprise agents

Before you compare the best AI agents for customer support, you need to know which generation you’re actually buying.

Most ‘best AI agent’ lists compare Gen 1 and Gen 2 tools side by side. This guide focuses on what separates Gen 3 enterprise agents from everything else, and gives you a framework to evaluate any vendor.

📖 Deep Dive: Still using legacy deflection tools? Read our guide on AI Agent vs. Chatbot: The Shift to Resolution in 2026.

Why most support teams are paying for AI that doesn’t work

- Level AI’s 2024 State of AI in the Contact Center report shows 100% of contact center leaders are considering new AI tools, yet 70% say pressure to adopt has increased faster than their ability to operationalize them.

- At the same time, Gartner expects agentic AI to autonomously resolve 80% of standard customer service queries by 2029, with around a 30% reduction in service costs. The future is clear; the present is stuck.

On top of that, frontline reality hasn’t changed much:

- Over half of service professionals report burnout and say workloads are more complex than a year ago, according to Salesforce’s State of Service Report.

- Many agents report they lack easy access to customer context, which directly leads to bad experiences and escalations.


The best AI agent isn’t the one with the most features. It’s the one that actually resolves tickets, connects to your systems, and doesn’t leak your data.

7 criteria for choosing a customer support agent that actually resolves tickets

Criterion #1: Architecture (what powers the brain?)

Problem

Most AI support tools run on retrieval‑augmented generation (RAG): the model searches relevant documents (usually help centre articles), then drafts a reply based on what it finds.

RAG works well for static FAQs, but it breaks down for real‑world support:

- Customer asks: “Why did my renewal price go up?”

- RAG agent pulls the generic pricing FAQ.

- The real answer lives in a chain: Customer → Contract → Tier Change → Billing Rules → Discounts.

To reason over that chain, you need a knowledge graph, not a pile of documents. A knowledge graph links entities (customers, products, tickets, contracts, engineers) and their relationships, so the AI can follow the actual data path that led to a result.
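To make the idea concrete, here is a minimal sketch of following a relationship chain in a graph. The entity names mirror the renewal example above; the `GRAPH` dictionary and the `find_path` helper are illustrative assumptions, not any vendor's actual data model.

```python
from collections import deque

# Hypothetical graph: each entity maps to the entities it directly relates to.
GRAPH = {
    "Customer": ["Contract", "Ticket"],
    "Contract": ["Tier Change"],
    "Tier Change": ["Billing Rules"],
    "Billing Rules": ["Discounts"],
    "Ticket": [],
    "Discounts": [],
}

def find_path(graph, start, goal):
    """Breadth-first search: return the shortest relationship chain, or None."""
    queue = deque([[start]])
    visited = {start}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == goal:
            return path
        for neighbor in graph.get(node, []):
            if neighbor not in visited:
                visited.add(neighbor)
                queue.append(path + [neighbor])
    return None

chain = find_path(GRAPH, "Customer", "Discounts")
print(" → ".join(chain))
# prints: Customer → Contract → Tier Change → Billing Rules → Discounts
```

A document search has no equivalent of this walk: it can return the pricing FAQ, but it cannot tell you which contract, tier change, and billing rule produced this customer's number.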

2025 research on hybrid RAG + knowledge graph setups reports hallucinations dropping by roughly 40%, and by up to 73% versus a plain LLM baseline in some multi-agent frameworks.

What to look for

- Explicit mention of a knowledge graph or graph database.

- Ability to explain why a specific outcome happened, not just repeat policy text.

- Stable behaviour when information is spread across systems, not one doc.

Red flag

- If a vendor’s architecture story is “we use vector databases and RAG over your knowledge base,” that usually means the system is pattern-matching against past text instead of reasoning.

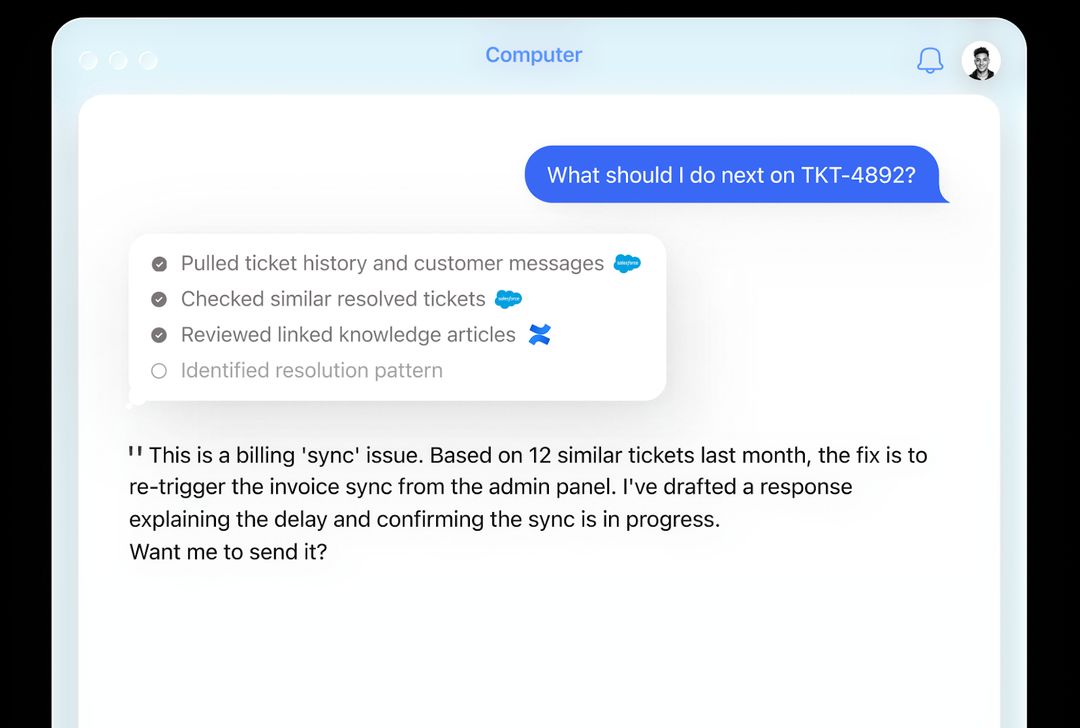

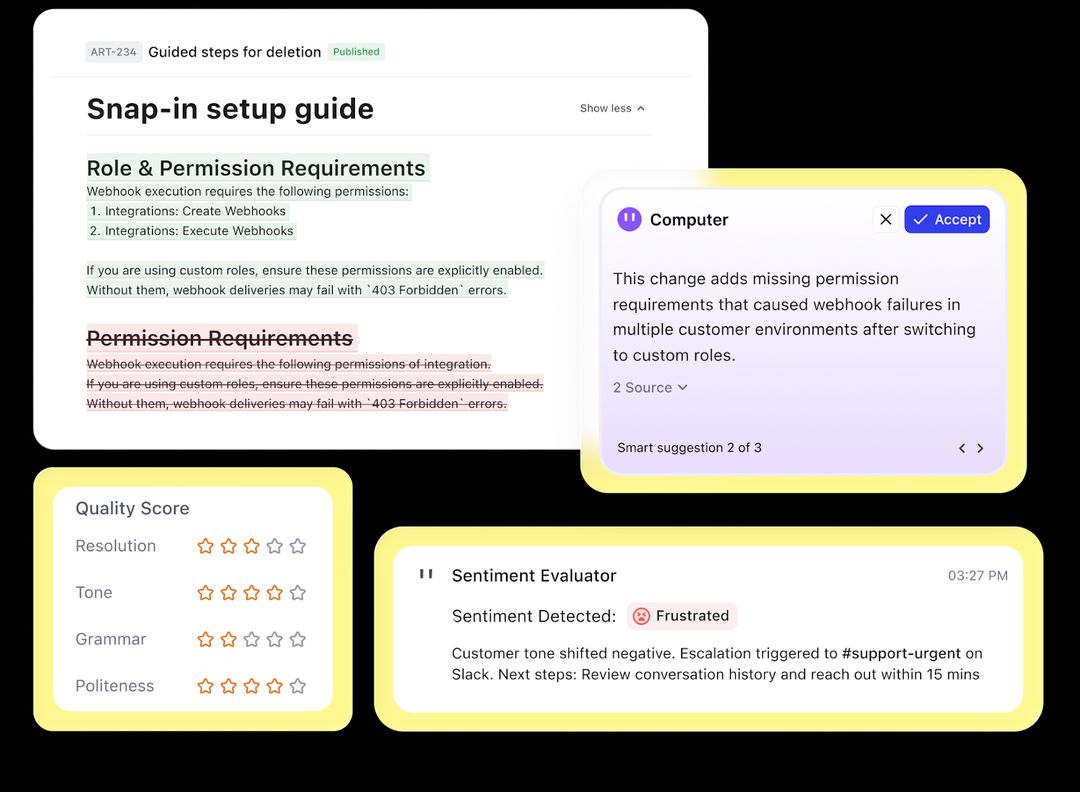

Computer, by DevRev, uses Computer Memory, a permission‑aware knowledge graph that stores customers, tickets, work items, products, revenue, and more as interconnected nodes. Instead of just searching documents, Computer operates inside this real‑time graph, so when you ask “Why did this renewal change?” it can walk the relationships, analyze the cause, provide an accurate response, and safely update the right records.

Criterion #2: Agency (can it actually execute?)

Problem

Many tools marketed as the best AI agents for customer support are still glorified copilots. They can read tickets and draft replies, but they can’t process refunds, update CRM records, or create or update engineering tickets without a human stepping in.

A true AI support agent should pass a simple three‑level test:

- Read: Can it pull information from multiple systems (CRM, billing, product logs)?

- Write: Can it write changes back to those systems, not just suggest an update?

- Act: Can it do both without human approval for routine actions?
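The three-level test can be written down as a small checklist you fill in during a demo. This is only a sketch: the `Capabilities` fields and the `classify` labels are assumptions made for illustration, loosely mapped to the chatbot, copilot, and agent distinction used in this guide.

```python
from dataclasses import dataclass

@dataclass
class Capabilities:
    can_read: bool               # pulls data from multiple systems
    can_write: bool              # writes changes back, not just suggestions
    acts_without_approval: bool  # completes routine actions with no human sign-off

def classify(cap: Capabilities) -> str:
    """Hypothetical labels: a tool is an 'agent' only if it passes all three levels."""
    if cap.can_read and cap.can_write and cap.acts_without_approval:
        return "agent"
    if cap.can_read:
        return "copilot"
    return "chatbot"

# A tool that drafts and even writes updates, but only after a human approves them.
print(classify(Capabilities(can_read=True, can_write=True, acts_without_approval=False)))
# prints: copilot
```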


What to look for

- AI-powered customer service that syncs both ways with your existing stack, so changes in one system automatically show up in the others.

- Full CRUD operations (create, read, update, delete), not just read-only access.

- Workflow triggers that execute actions like support ticket automation end-to-end, not just suggest what a human should do next.

Red flag

- If a vendor says, “Our AI drafts replies for your agents to approve,” you’re looking at a copilot, not an agent. It may be useful, but it’s still assistive, not autonomous.

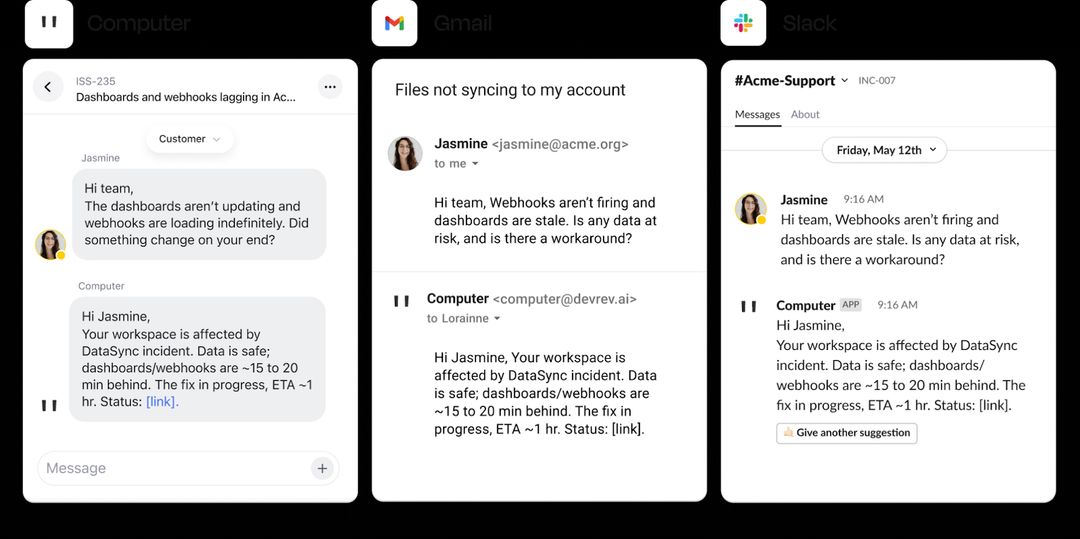

Computer pairs Computer Memory with AirSync, a 2-way sync engine that reads from and writes to the systems in your tech stack (CRM, helpdesk, project management tools, and Slack) in real time, so the agent can actually do work, not just talk about it. One action, all systems updated.

Criterion #3: Integration (does it connect support to engineering?)

Problem

Most AI support agents live inside the help desk. They can see tickets and knowledge articles, but they can’t see or shape what happens in the product backlog. Support teams end up treating symptoms (alerts, angry emails, repeat tickets) while engineering quietly works on root causes in a separate system. The result is predictable: the same bugs surface as tickets again and again.

Teams that integrate their AI support agent with engineering see a different curve.

Bolt unified roughly 200,000 historical tickets and its product backlog with DevRev, cutting ticket resolution times by more than 40% and improving customer retention by 25%. A big driver was smarter routing: instead of misdirected tickets bouncing around, AI could link issues straight into the support-engineering alignment layer, creating a shared view of bugs, impact, and progress across both teams.

The migration was seamless and efficient, and the DevOps side was notably easy. Within just two weeks, we successfully imported around 200,000 Zendesk tickets and 800 knowledge base articles along with 12 workflows.

Elec Boothe

Director of Support Engineering & Risk, Bolt

What to look for

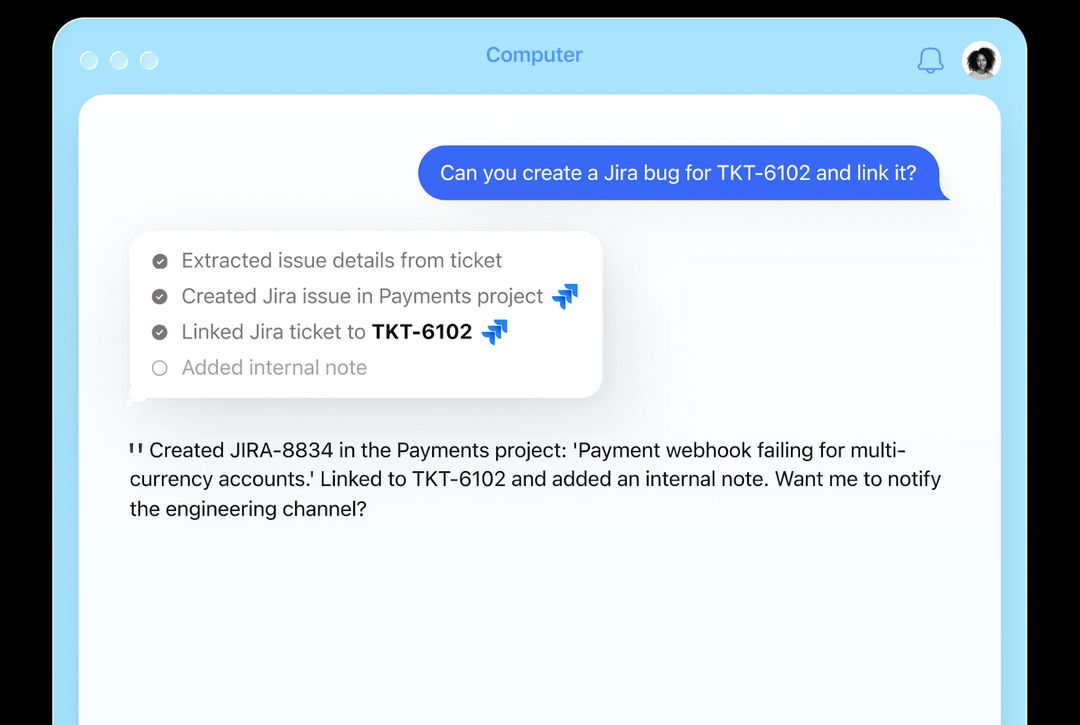

- Native integrations with engineering tools like Jira, GitHub, Linear, and incident managers, not just email or Slack.

- The ability for the AI to automatically escalate a product bug from a support conversation, with full context from the customer feedback loop.

- When a fix ships, affected customers are automatically identified and notified, and related tickets are updated.

Red flag

- If a vendor says, “We integrate with Slack for notifications,” remember that alerts alone are not integration. If the AI can’t create, update, and track real engineering work items, you still have a human doing the glue work between tools.

In Computer’s unified data layer, support tickets, engineering work items, and customer data live in the same knowledge graph. When a customer reports a bug, Computer doesn’t just file a ticket. It links to the engineering backlog, checks if a fix is in progress, and tells the customer the timeline automatically.

Criterion #4: Governance (is your data safe?)

Problem

AI customer support security now sits right on top of your customer, product, and revenue data. If data governance is weak, that’s a breach headline waiting to happen.

IBM’s 2025 Cost of a Data Breach report found that 1 in 5 organizations experienced an AI-related breach, and those incidents cost on average $670,000 more than standard breaches. A follow‑up analysis notes that 97% of AI‑related breaches lacked proper access controls, meaning the AI layer could see more than it should. At the same time, LayerX reports that 77% of employees paste data into GenAI tools, and 82% of that happens from personal, unmanaged accounts – a massive blind spot for data leakage.

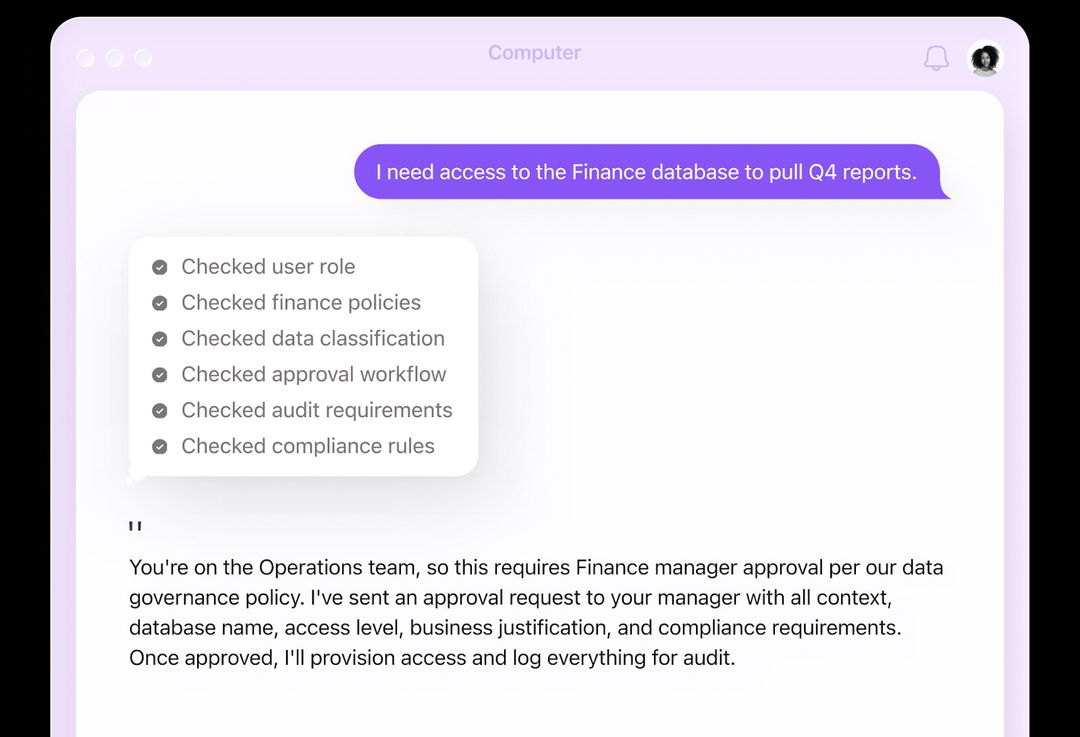

A simple test when evaluating the best AI for customer service: log in as a junior support rep and ask the AI agent for executive salary data or board-level contracts. A robust system should block this at the data layer using role-based access control (RBAC), not just say “I can’t answer that” because of a prompt-level guardrail.

What to look for

- Node‑level AI agent RBAC inherited from your source systems (e.g., Salesforce, Jira) so the AI never sees what the user cannot see.

- Clear certifications and controls: SOC 2, GDPR, HIPAA where relevant, plus documented AI governance policies.

- Full audit logging of AI actions and data access: who asked, what was accessed, what changed.

Red flag

- If a vendor’s main reassurance is “our AI has guardrails,” you’re still exposed. Prompt‑level guardrails can be bypassed or jailbroken; data‑level permissions cannot.
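The difference between data-level permissions and prompt-level guardrails is easier to see in code. The sketch below assumes a hypothetical `Node` type with an `allowed_roles` set; real platforms will model permissions differently. The point is only that restricted content is filtered out before the model's context is assembled.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Node:
    node_id: str
    content: str
    allowed_roles: frozenset  # roles permitted to read this node (assumed schema)

NODES = [
    Node("kb-1", "Refund policy: 30 days.", frozenset({"support_rep", "finance", "exec"})),
    Node("hr-9", "Executive salary bands.", frozenset({"exec"})),
]

def visible_nodes(nodes, role):
    """Data-layer filter: drop every node the caller's role may not read."""
    return [n for n in nodes if role in n.allowed_roles]

def build_context(nodes, role):
    """Only permitted content is ever assembled into the model's context."""
    return "\n".join(n.content for n in visible_nodes(nodes, role))

print(build_context(NODES, "support_rep"))
# prints: Refund policy: 30 days.
```

Because the salary node never reaches the model for a `support_rep`, no prompt can coax it into the reply. A guardrail that asks the model to refuse still hands the model the data first.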

Computer Memory, DevRev’s knowledge graph, is permission‑aware by design: it inherits access controls from your systems of record, then enforces those permissions at the graph level. If Salesforce says a given rep cannot see executive data, Computer’s enterprise AI agents never see it either – which means they cannot leak it in a response, no matter what prompt they receive.

Criterion #5: Intelligence (resolution or just deflection?)

Problem

Most dashboards celebrate deflection rate, i.e., how many tickets the AI handled without a human. That’s a vanity metric if you’re not also tracking resolution quality and customer satisfaction. Resolution is different: the issue is closed, the customer is satisfied, and no one has to contact you again.

What to look for

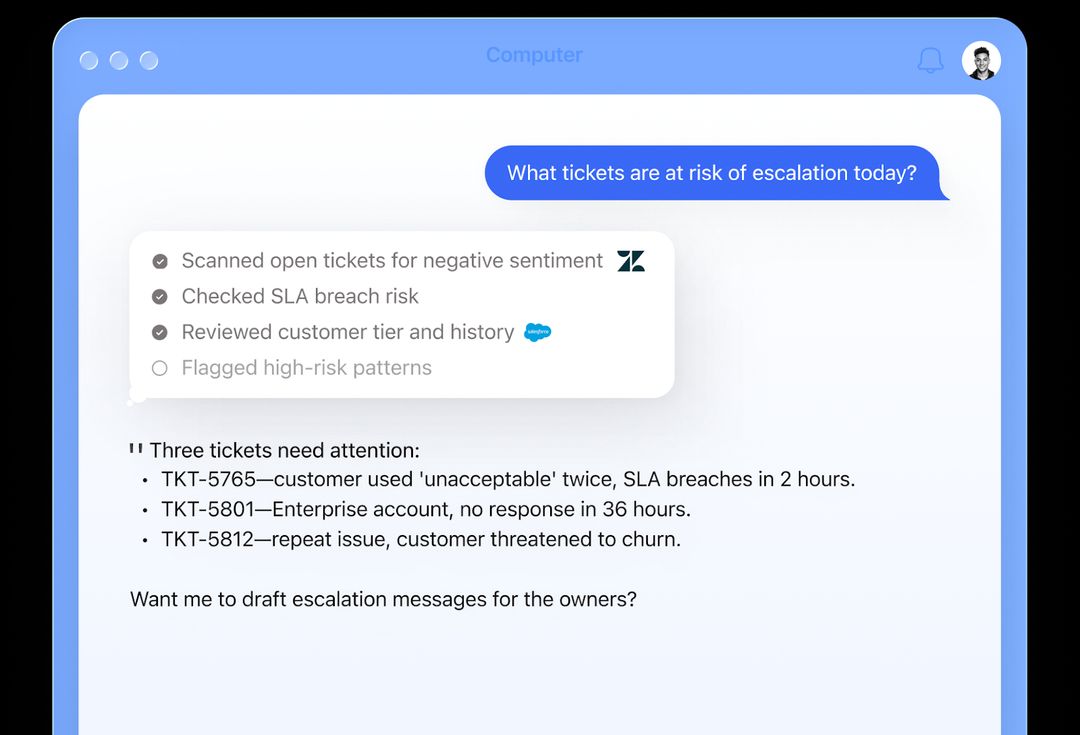

- Separate metrics for:

  - AI deflection/automation rate (what never touched a human).

  - AI resolution rate (issues solved end-to-end by AI with no follow-up).

  - CSAT or NPS on AI-handled conversations vs human-handled ones.

- Clear reporting on the types of issues the AI is allowed to resolve vs what it should escalate.

- Evidence from existing customers that human workload dropped and customer satisfaction held steady or improved.
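To see how far deflection and resolution can drift apart, here is a small worked example. The `Ticket` fields and the four sample tickets are invented for illustration; the definitions follow the ones in the list above.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class Ticket:
    handled_by_ai_only: bool        # no human touched the conversation
    issue_fixed: bool               # the underlying problem was solved
    reopened_or_followed_up: bool   # the customer had to contact support again
    csat: Optional[int] = None      # 1-5 survey score, if the customer answered

def deflection_rate(tickets):
    """Share of tickets that never touched a human."""
    return sum(t.handled_by_ai_only for t in tickets) / len(tickets) if tickets else 0.0

def resolution_rate(tickets):
    """Share of tickets solved end-to-end by AI with no follow-up."""
    resolved = [t for t in tickets
                if t.handled_by_ai_only and t.issue_fixed and not t.reopened_or_followed_up]
    return len(resolved) / len(tickets) if tickets else 0.0

def avg_csat(tickets, ai_only):
    """Average CSAT for AI-handled (ai_only=True) or human-handled tickets."""
    scores = [t.csat for t in tickets
              if t.handled_by_ai_only == ai_only and t.csat is not None]
    return sum(scores) / len(scores) if scores else None

# Made-up sample data.
TICKETS = [
    Ticket(True, True, False, csat=5),   # truly resolved by AI
    Ticket(True, False, True, csat=2),   # deflected, but the customer came back
    Ticket(True, True, True, csat=3),    # fixed, yet needed a follow-up
    Ticket(False, True, False, csat=4),  # handled by a human
]

print(deflection_rate(TICKETS))  # prints: 0.75
print(resolution_rate(TICKETS))  # prints: 0.25
```

In this sample the dashboard headline would be 75% deflection, while only 25% of tickets were actually resolved by the AI.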

Red flag

- If a vendor only shows deflection and can’t break out true resolution or CSAT on AI‑handled tickets, assume the bot is good at ending conversations, not at fixing problems.

Computer tracks customer support resolution and satisfaction together, not just how many tickets it touched. Its analytics show which issues the agentic AI resolved on its own, which ones it only assisted on, and which ones it escalated.

Criterion #6: Orchestration (one agent or a team?)

Problem

Most companies try to cram every use case into one god bot. It starts as a simple support bot and slowly absorbs billing, onboarding, internal Q&A, and more, until it becomes slow, hard to debug, and bad at everything.

On top of that, fewer than 10% of organizations that start with a single agent successfully make the leap to orchestrated multi‑agent systems, mainly because they lack a shared context layer and orchestration strategy.

What to look for

- Support for multiple, named agents that can hand off or collaborate on a conversation.

- A shared memory or context layer so agents see the same customer, ticket, and product state instead of working in silos.

- Clear routing and escalation patterns, like which agent owns what, and how the platform decides who should handle each step.
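A rough sketch of what these three points look like together: named specialist agents, an explicit routing table that says who owns what, and one shared context they all read and write. Every name here (`ROUTES`, `AGENTS`, `handle`, the agent functions) is hypothetical and deliberately simplified.

```python
def support_agent(ctx):
    ctx["history"].append("support_agent: answered question")

def billing_agent(ctx):
    ctx["history"].append("billing_agent: reviewed charge")

def engineering_agent(ctx):
    ctx["history"].append("engineering_agent: linked bug to backlog")

# Named specialists, looked up by name.
AGENTS = {
    "support_agent": support_agent,
    "billing_agent": billing_agent,
    "engineering_agent": engineering_agent,
}

# Explicit ownership: which agent handles which intent.
ROUTES = {"billing": "billing_agent", "bug": "engineering_agent"}
DEFAULT_AGENT = "support_agent"

def handle(ctx, intents):
    """Route each intent to its owner; every agent works on the same shared context."""
    for intent in intents:
        owner = ROUTES.get(intent, DEFAULT_AGENT)
        AGENTS[owner](ctx)
    return ctx

shared = {"customer": "acme", "ticket": "T-1", "history": []}
handle(shared, ["billing", "bug"])
print(shared["history"])
# prints: ['billing_agent: reviewed charge', 'engineering_agent: linked bug to backlog']
```

The single `history` list is the part worth noticing: each specialist sees what the previous one did, and you get one ordered record of the whole conversation.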

Red flag

- If the AI support platform only offers a single, monolithic bot that is supposed to do everything, or if multi‑agent just means multiple prompts inside one brain, you’ll hit complexity limits fast and end up back in god‑bot territory.

DevRev’s Agent Studio lets you define specialist agents for support, sales, engineering, success, and more, while Computer Memory acts as the shared knowledge graph they all draw from. That means a support agent, a billing agent, and an engineering agent can each do their part on the same issue, with shared context, fewer handoffs, and a single, auditable history of what happened.

Criterion #7: Transparency (can you audit its decisions?)

Problem

Under the EU AI Act, transparency and traceability become mandatory for many high-risk and limited-risk AI systems by August 2026. In parallel, SOC 2 and similar frameworks are evolving: auditors increasingly ask not just whether AI is controlled, but “Why did the AI make this decision, and can you prove it?”

What to look for

- A built‑in decision log for each AI action, capturing what data it accessed, what intermediate steps it took, and what final decision it made.

- Tools to replay and inspect AI behavior for a given ticket, customer, or time window (including model version and prompts).

- Exportable evidence (logs, traces, reports) you can hand to Security, Risk, and auditors without manual reconstruction.
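As an illustration of what a decision log entry might capture, here is a minimal sketch. The `DecisionLogEntry` fields are assumptions based on the checklist above (data accessed, intermediate steps, final decision, model version), not a schema required by the EU AI Act or SOC 2.

```python
import json
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone

@dataclass
class DecisionLogEntry:
    ticket_id: str
    actor: str            # which agent acted
    model_version: str
    data_accessed: list   # records the agent read
    steps: list           # intermediate steps it took
    decision: str         # final action or answer
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

def export_log(entries):
    """Serialize entries to JSON so they can be handed to auditors as-is."""
    return json.dumps([asdict(e) for e in entries], indent=2)

entry = DecisionLogEntry(
    ticket_id="T-1",
    actor="billing_agent",
    model_version="example-model-v1",  # placeholder value
    data_accessed=["contract:42", "invoice:2025-03"],
    steps=["matched duplicate charge", "checked refund policy"],
    decision="refund issued",
)
print(export_log([entry]))
```

In a vendor demo, ask to see the real equivalent of this record for a ticket you choose, not one picked by the vendor.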

Red flag

- If the vendor can’t show you, for a real ticket, exactly how the AI reached its conclusion, and instead talks vaguely about proprietary magic, you’ll struggle to satisfy AI Act transparency duties and SOC 2 style reviews.

A black box is a liability, not an asset.

Computer runs on a knowledge graph, enabling full traceability of every AI decision: it logs touched nodes (e.g., customers, tickets, features, incidents), followed relationships, executed rules/workflows, and sources/citations – creating a clear, human-readable reasoning trail for each resolution.

The AI customer service platform comparison matrix

Once you have this framework, you can compare vendors more clearly.

You can turn this into a scorecard and rate each AI agent for customer service against the criteria that matter most to your business.
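One simple way to build that scorecard is a weighted average across the seven criteria. The sketch below assumes a 1 to 5 rating scale and weights you choose yourself; the sample ratings and weights are made up.

```python
CRITERIA = [
    "architecture", "agency", "integration", "governance",
    "intelligence", "orchestration", "transparency",
]

def weighted_score(ratings, weights):
    """Weighted average of 1-5 ratings; raises if any criterion is missing."""
    missing = [c for c in CRITERIA if c not in ratings or c not in weights]
    if missing:
        raise ValueError(f"missing criteria: {missing}")
    total_weight = sum(weights[c] for c in CRITERIA)
    return sum(ratings[c] * weights[c] for c in CRITERIA) / total_weight

# Example: governance matters twice as much as everything else for this buyer.
weights = {c: 1 for c in CRITERIA}
weights["governance"] = 2

# Example vendor: average everywhere, strong on governance.
ratings = {c: 3 for c in CRITERIA}
ratings["governance"] = 5

print(weighted_score(ratings, weights))  # prints: 3.5
```

Agree on the weights before the vendor calls start, so the scores reflect your priorities and not the most recent demo.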

How to evaluate your next customer support AI platform: interview checklist

Turn this into a live interview script for your next vendor call so you can pressure‑test any customer support AI platform.

Compare the best AI agents for customer support on fit, not just features.

Stop comparing chatbots. Start hiring an agent.

The best AI agents for customer support are not the ones with the longest feature pages or the flashiest demos. They are the ones that:

- Resolve tickets end‑to‑end, not just deflect them.

- Respect your permissions and give you an audit trail.

- Act like teammates you can trust, not black boxes you fear.

- Connect support to engineering and the rest of your stack.

Stop comparing chatbot flows and FAQ widgets. Treat your next AI purchase like hiring a key AI teammate, not adding a feature. If you want to see what a Gen 3 enterprise AI support platform looks like in practice, you can:

- See Computer resolve a real ticket in an interactive demo.

- Explore the Computer+ Support app to see how Computer Memory and AirSync work together behind the scenes.

Book a demo to get a 30-minute guided tour of Computer. Bring your toughest support scenario. We’ll resolve it live.

