The Main Thread Tax: IndexedDB vs. Our LCP - Part 1.

Adit Shah

Member of Technical Staff

Part 1 of 2: How client-side persistence broke our app before it saved it.

A 7-second Largest Contentful Paint is a dealbreaker for any product. Client-side caching is the standard remedy: persist API data to IndexedDB, restore it on reload, and skip the network round-trip. Most performance guides recommend it.

We implemented it and destroyed interaction latency instead. Clicks stopped registering. Text inputs lagged. Users told us the app felt broken, and they were right.

The root cause wasn't obvious. At production scale, with hundreds of cached queries, Interaction to Next Paint (INP) spiked to 3 seconds on interactions that triggered cache writes, even on modern machines. There were no external factors to blame. The problem was entirely self-inflicted: structured clone serialization ran on the main thread and blocked user input while the browser prepared data for IndexedDB.

A quick note on LCP: Largest Contentful Paint measures how long it takes for the largest visible element on a page - typically a hero image, data table, or key content block - to render. It's a Core Web Vital: 2.5 seconds or less is rated good, and anything above 4 seconds is rated poor. In our case, the LCP element was usually data-driven: a dashboard widget or conversation list that couldn't appear until API data arrived.
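Core Web Vitals are assessed at the 75th percentile of page loads, which is why this series reports LCP as P75. The sketch below shows one way to turn raw LCP samples into a P75 value and a rating. The sample values, the function names, and the nearest-rank percentile method are assumptions for illustration, not our measurement pipeline.

```typescript
// Ratings follow the published LCP thresholds:
// "good" is at or below 2.5 s, "poor" is above 4 s.
type LcpRating = "good" | "needs-improvement" | "poor";

function rateLcp(ms: number): LcpRating {
  if (ms <= 2500) return "good";
  if (ms <= 4000) return "needs-improvement";
  return "poor";
}

// Nearest-rank percentile: the smallest sample such that at least
// p percent of all samples are less than or equal to it.
function percentile(samples: number[], p: number): number {
  if (samples.length === 0) throw new Error("no samples");
  const sorted = samples.slice().sort((a, b) => a - b);
  const rank = Math.max(1, Math.ceil((p / 100) * sorted.length));
  return sorted[rank - 1];
}

// Hypothetical LCP samples in milliseconds, made up for this example.
const lcpSamples = [1800, 2200, 3100, 6900, 7400, 2400, 7100, 5200];
const p75 = percentile(lcpSamples, 75);

console.log(p75, rateLcp(p75)); // 6900 "poor"
```

A handful of slow loads is enough to drag P75 into the poor range, even when many individual loads are fast.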

This two-part series is the story of what went wrong, why the obvious solutions didn't work, and the architecture we eventually built to bring LCP P75 from 7 seconds to under 2.5 - without SSR, without degrading INP, and without blowing up memory.

In this first part, we'll cover the problem: what was actually slow, why our first attempt at caching made it worse, and why React Query's built-in persistence options didn't fit. In Part 2, we'll walk through the architecture that finally worked and the results it produced.

The application: authenticated data at scale

To understand why this problem was hard, you need to understand the kind of application we were dealing with. The performance strategies that work for simple apps behave very differently at this scale.

DevRev is not a marketing site or a simple CRUD app. It's a large product that combines CRM workflows, issue tracking, dashboards, analytics, and real-time conversations. Almost every screen is data-driven, and more importantly, what you see depends on who you are, which organization you belong to, and what permissions you have.

At any given time, the React Query cache holds hundreds of active queries. When a list API returns 50 items, we normalize the response into individual object-level queries for each item - so a single network request can produce dozens of cache entries. This is useful for consistency across views, but it means the query graph is large and deeply interconnected.
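To make the fan-out concrete, here is a minimal sketch of list normalization. `TinyCache` is a hypothetical stand-in for the query cache (with React Query, `queryClient.setQueryData` plays this role), and the key shapes and the choice to store the list as an array of ids are assumptions.

```typescript
type QueryKey = readonly unknown[];

// Hypothetical stand-in for the small part of a query cache used here.
class TinyCache {
  private entries = new Map<string, unknown>();

  setQueryData(key: QueryKey, data: unknown): void {
    this.entries.set(JSON.stringify(key), data);
  }

  getQueryData<T>(key: QueryKey): T | undefined {
    return this.entries.get(JSON.stringify(key)) as T | undefined;
  }

  get size(): number {
    return this.entries.size;
  }
}

interface Item {
  id: string;
  title: string;
}

// Store the list as ids (an assumption), plus one object-level entry per item.
function normalizeList(cache: TinyCache, listKey: QueryKey, items: Item[]): void {
  cache.setQueryData(listKey, items.map((item) => item.id));
  items.forEach((item) => cache.setQueryData(["object", item.id], item));
}

const cache = new TinyCache();
const items: Item[] = Array.from({ length: 50 }, (_, i) => ({
  id: `item-${i}`,
  title: `Item ${i}`,
}));

normalizeList(cache, ["list", "issues"], items);
console.log(cache.size); // 51: one list entry plus 50 object entries
```

One response of 50 items becomes 51 cache entries, which is how a few list screens grow the cache into the hundreds.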

Users also frequently open pages in new tabs. That means full page reloads, not client-side route transitions. Every new tab goes through the full startup sequence: download, parse, execute, bootstrap, fetch data, render. The performance strategies that work within an SPA session - prefetching, route-level code splitting, stale-while-revalidate - don't help when someone reloads the app or opens it in a new tab.

Decomposing LCP: finding the real bottleneck

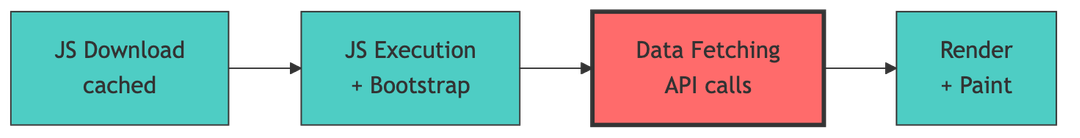

Before optimizing anything, we spent time decomposing what was actually contributing to LCP in a client-rendered SPA.

JavaScript download was usually fast – our assets were cached by the browser. JavaScript execution and app bootstrap mattered, but they weren't our biggest bottleneck.

What stood out very clearly was data fetching. The largest contentful element on most pages wasn't static markup - it was a data-driven component that couldn't render until multiple API queries completed. Even if rendering was instantaneous, we couldn't paint the LCP element until the data arrived.

This was a mindset shift for us. We didn't really have a rendering problem. We had a data availability problem.

Why SSR wasn't our answer: personalized, permissioned data

If you're thinking "just use SSR" – we get it. SSR is a strong LCP lever when you can cache the rendered output. For a marketing page or a product listing, SSR plus a CDN can serve pre-rendered HTML in milliseconds.

Our situation was different. Almost all data is authenticated and permissioned. What you see depends on your identity, your org membership, and your access level. The data changes frequently – think notification feeds and activity timelines. You can't serve the same pre-rendered page to different people, and the data changes too fast to cache server-rendered output for meaningful durations.

More fundamentally, our LCP element depended on data, not markup. Rendering HTML earlier on the server didn't help if the data the component needed still required per-user API calls after hydration.

To be clear - this isn't an anti-SSR post. For our specific constraints - authenticated, permissioned, personalized, frequently changing data - data availability on the client was the dominant bottleneck, not markup generation. Your mileage may vary.

Naive persistence: why structured clone broke INP

Once we identified data availability as the bottleneck, the next step seemed obvious. If data fetching is what delays LCP, cache the data on the client. Restore it on reload so the page can paint immediately while fresh data loads in the background.

We were already using React Query across the application, so we started with the standard approach: dehydrate the query cache, write it to IndexedDB, restore on page load, hydrate back into React Query. The page renders with cached data instantly, marks it stale, and refetches fresh data in the background.
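In outline, that flow looks like the sketch below. It is a simplified model, not React Query's API: `AsyncStore` is a hypothetical interface standing in for an IndexedDB wrapper, `MemoryStore` exists only so the example runs anywhere, and the snapshot key is made up.

```typescript
interface CachedQuery {
  queryKey: readonly unknown[];
  data: unknown;
  updatedAt: number;
}

type RestoredQuery = CachedQuery & { stale: boolean };

// Hypothetical key-value interface standing in for an IndexedDB wrapper.
interface AsyncStore {
  get(key: string): Promise<unknown>;
  set(key: string, value: unknown): Promise<void>;
}

// In-memory implementation so the sketch runs outside a browser.
// A real IndexedDB put would structured-clone `value` at this point.
class MemoryStore implements AsyncStore {
  private map = new Map<string, unknown>();
  async get(key: string): Promise<unknown> {
    return this.map.get(key);
  }
  async set(key: string, value: unknown): Promise<void> {
    this.map.set(key, value);
  }
}

const SNAPSHOT_KEY = "query-cache-snapshot"; // hypothetical key name

// Naive persist: the whole cache goes out as one value on every write.
async function persistAll(store: AsyncStore, cache: Map<string, CachedQuery>): Promise<void> {
  await store.set(SNAPSHOT_KEY, Array.from(cache.values()));
}

// Naive restore: everything comes back at once and is marked stale
// so it can be refetched in the background.
async function restoreAll(store: AsyncStore): Promise<Map<string, RestoredQuery>> {
  const snapshot = ((await store.get(SNAPSHOT_KEY)) ?? []) as CachedQuery[];
  const restored = new Map<string, RestoredQuery>();
  snapshot.forEach((q) => restored.set(JSON.stringify(q.queryKey), { ...q, stale: true }));
  return restored;
}

const store = new MemoryStore();
const live = new Map<string, CachedQuery>([
  ['["list","issues"]', { queryKey: ["list", "issues"], data: ["issue-1"], updatedAt: 1 }],
  ['["object","issue-1"]', { queryKey: ["object", "issue-1"], data: { id: "issue-1" }, updatedAt: 1 }],
]);

const roundTrip = persistAll(store, live).then(() => restoreAll(store));
roundTrip.then((restored) => console.log(restored.size)); // 2
```

Note that both directions handle the cache as a single value, so the work per call is proportional to the whole cache rather than to what changed.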

What we expected: faster reloads, non-blocking data fetching, better LCP.

What actually happened: main-thread jank, long tasks during restore, and severely degraded INP.

This worked fine during development with a small number of queries. It broke once we rolled it out at production scale with hundreds of cached queries and deeply nested data structures.

Restoring the cached data caused visible jank. Clicks wouldn't register. Text inputs felt delayed. The app felt broken in a way that was worse than waiting for the data to load from the network.

To understand why, we had to look at what was actually happening under the hood.

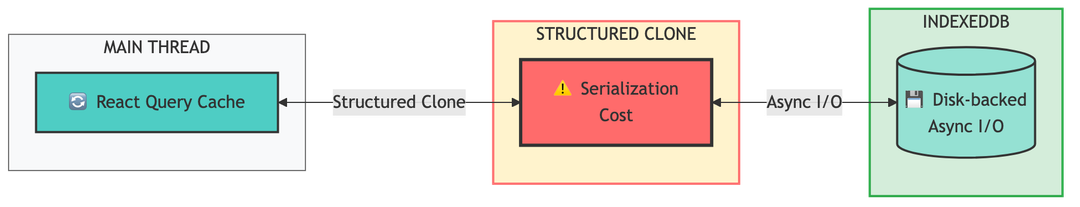

IndexedDB operations are asynchronous. But "asynchronous" only describes the I/O - the actual disk read and write. Before any data reaches IndexedDB, the browser must serialize it using the structured clone algorithm. And before restored data becomes usable JavaScript objects, the browser must deserialize it. When IndexedDB is called from the main thread, both of these operations run on the main thread.

For small objects, this is negligible. For a cache containing hundreds of queries with deeply nested, normalized data structures, structured clone becomes the dominant source of long tasks. At our scale, serializing and deserializing the full query cache produced long tasks of 1-3 seconds, as measured in the DevTools Performance panel. The main thread was visibly blocked for that whole time, and an interaction that lands while the main thread is blocked is exactly the delay INP captures. The loader animation on screen would freeze mid-frame because the main thread couldn't run requestAnimationFrame callbacks while structured clone was executing.
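One rough way to see this cost outside IndexedDB is to time the global `structuredClone()` on a synthetic cache. This is only an approximation: `structuredClone()` performs a serialize and a deserialize in one call, the cache shape from `buildCache` is invented, and actual timings depend on the machine and the data.

```typescript
// Build a synthetic cache: `queries` entries, each a chain of nested objects.
// The shape is made up for illustration and is not a real API payload.
function buildCache(queries: number, depth: number): Record<string, unknown> {
  const cache: Record<string, unknown> = {};
  for (let i = 0; i < queries; i++) {
    let node: Record<string, unknown> = { id: i, tags: ["a", "b", "c"] };
    for (let d = 0; d < depth; d++) {
      node = { level: d, child: node };
    }
    cache[`query-${i}`] = node;
  }
  return cache;
}

// structuredClone is synchronous: the calling thread does nothing else
// until the copy is complete.
function timeClone(value: unknown): { ms: number; copy: unknown } {
  const start = performance.now();
  const copy = structuredClone(value);
  return { ms: performance.now() - start, copy };
}

const smallCache = buildCache(10, 5);
const largeCache = buildCache(500, 20);

const small = timeClone(smallCache);
const large = timeClone(largeCache);

console.log(`10 queries: ${small.ms.toFixed(2)} ms`);
console.log(`500 queries: ${large.ms.toFixed(2)} ms`);
```

Run on the main thread of a page, the second call is the kind of work that shows up as a long task in the Performance panel.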

The issue wasn't that persistence was a bad idea. It was that something fundamental about the cost model didn't scale. Persisting the entire cache, all at once, with all the serialization work happening on the main thread - that approach has a cost that grows linearly with cache size. And in a large application, cache size is not something you control.

Evaluating off-the-shelf: where React Query fell short

Before building our own solution, we evaluated React Query's official persistence options.

persistQueryClient, the standard plugin, dehydrates the entire cache into a single serialized blob and restores it as an all-or-nothing operation. This has the same structured clone cost problem we already encountered. It also couples gcTime with persistence duration - the docs explicitly state that gcTime should be set "as the same value or higher" than maxAge. If you want queries to survive on disk for 24 hours, you have to keep them in memory for 24 hours too. For an app with hundreds of queries, that's a significant memory cost.

experimental_createPersister, the newer per-query approach, was closer to what we needed. It stores each query individually and wraps queryFn to check storage before making a network request. But it has a fundamental coupling to the useQuery lifecycle that didn't work for us.

The persister wraps queryFn, which means it only runs when a component mounts with useQuery. If you populate the cache imperatively via queryClient.setQueryData() - which we do extensively for normalizing list responses into individual object queries - the persister never sees those entries. They never get stored.

The same limitation applies in reverse. If you read data via queryClient.getQueryData(), no restore happens, because the persisted queryFn wrapper never ran. Many of our patterns - prefetching, derived computations, imperative reads in event handlers - bypass the useQuery hook entirely.
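The toy model below illustrates both gaps. It is a deliberately simplified, synchronous sketch and not the library's implementation: `withPersister`, `fetchQuery`, and the two maps are hypothetical stand-ins for the queryFn wrapper, the hook-driven fetch path, on-disk storage, and the in-memory cache.

```typescript
type QueryFn<T> = () => T;

const storage = new Map<string, unknown>(); // stand-in for on-disk storage
const memory = new Map<string, unknown>(); // stand-in for the in-memory cache

// Toy persister: wraps queryFn so that a fetch checks storage first
// and writes the fetched result back to storage.
function withPersister<T>(hash: string, queryFn: QueryFn<T>): QueryFn<T> {
  return () => {
    if (storage.has(hash)) return storage.get(hash) as T; // restore path
    const data = queryFn();
    storage.set(hash, data); // persist path
    return data;
  };
}

// Path 1: hook-style fetch. The wrapped queryFn runs, so the entry is persisted.
function fetchQuery<T>(hash: string, queryFn: QueryFn<T>): T {
  const data = withPersister(hash, queryFn)();
  memory.set(hash, data);
  return data;
}

// Path 2: imperative write. No queryFn runs, so storage never sees the entry.
function setQueryData(hash: string, data: unknown): void {
  memory.set(hash, data);
}

// Path 3: imperative read. Only memory is consulted; nothing is restored.
function getQueryData(hash: string): unknown {
  return memory.get(hash);
}

fetchQuery("list:issues", () => ["issue-1", "issue-2"]);
setQueryData("object:issue-1", { id: "issue-1" });

console.log(storage.has("list:issues")); // true
console.log(storage.has("object:issue-1")); // false

memory.clear(); // simulate a reload: memory is empty, storage survives
console.log(getQueryData("list:issues")); // undefined
```

After the simulated reload, the list is still in storage but an imperative read cannot reach it, and the object-level entry was never stored at all.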

There's also the restore ordering problem. When 200+ queries mount simultaneously on page load, each one independently reads from storage. That's 200+ separate IndexedDB reads, each triggering its own structured clone on the main thread, with no batching and no way to prioritize which queries load first. As we'll see in Part 2, these individual main-thread interruptions add up - even small reads fragment the critical path.

What we needed was something that captured everything in the cache (including setQueryData entries), persisted incrementally instead of as a full blob, restored selectively instead of all-or-nothing, and fully decoupled in-memory lifetime from on-disk lifetime. Five minutes of gcTime in memory to keep heap usage low. Forty-eight hours of persistence on disk to survive reloads.
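The decoupling can be expressed as two independent constants. In the sketch below, the 5-minute and 48-hour values come from the text; the entry shape, the function names, and the timestamps are hypothetical.

```typescript
const HOUR_MS = 60 * 60 * 1000;
const GC_TIME_MS = 5 * 60 * 1000; // in-memory lifetime: 5 minutes
const DISK_MAX_AGE_MS = 48 * HOUR_MS; // on-disk lifetime: 48 hours

// Hypothetical shape of an on-disk entry.
interface PersistedEntry {
  hash: string;
  persistedAt: number; // epoch milliseconds
}

// An unused query leaves memory once it has been idle longer than gcTime.
function isEvictedFromMemory(idleMs: number): boolean {
  return idleMs > GC_TIME_MS;
}

// The same query stays restorable from disk until it exceeds the disk max age.
function isRestorable(entry: PersistedEntry, now: number): boolean {
  return now - entry.persistedAt <= DISK_MAX_AGE_MS;
}

const now = Date.UTC(2025, 0, 3); // fixed timestamp so the example is deterministic
const onDisk: PersistedEntry[] = [
  { hash: "recent", persistedAt: now - 1 * HOUR_MS },
  { hash: "almost-expired", persistedAt: now - 47 * HOUR_MS },
  { hash: "expired", persistedAt: now - 49 * HOUR_MS },
];

const restorable = onDisk.filter((e) => isRestorable(e, now)).map((e) => e.hash);

console.log(restorable); // ["recent", "almost-expired"]
console.log(isEvictedFromMemory(1 * HOUR_MS)); // true
```

The entry persisted an hour ago has long since left memory, yet it is still available for the next reload.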

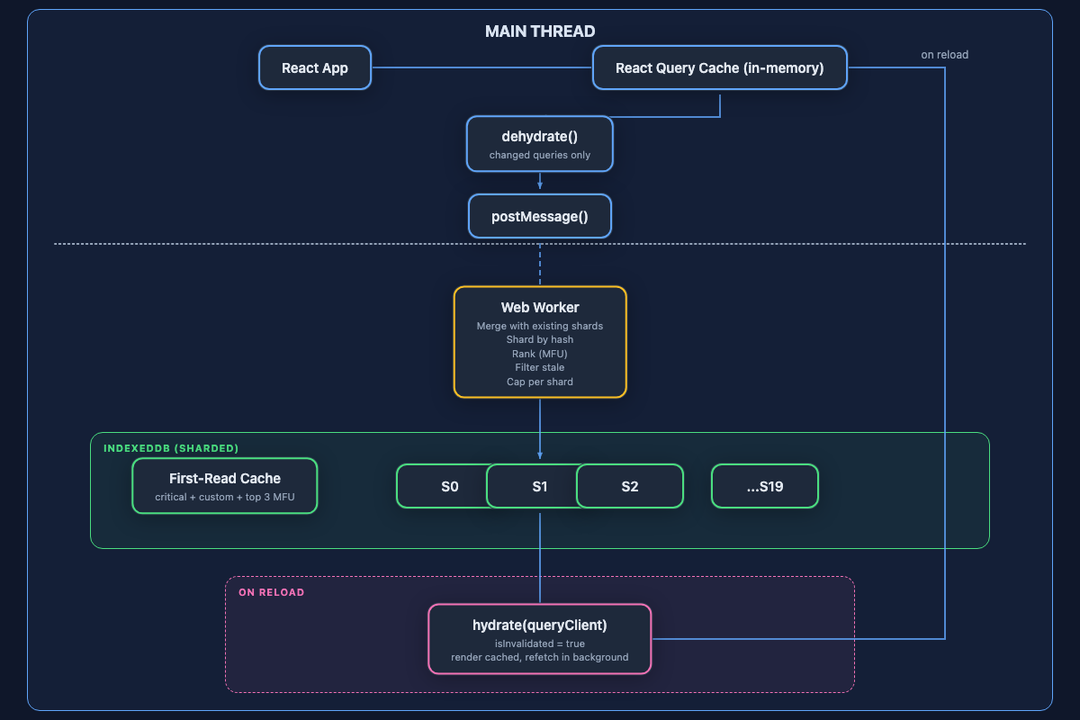

Architecture overview: Web Workers and selective restore

None of the existing options fit our constraints. So we built a custom persistence layer around React Query with three core design principles:

- All heavy work off the main thread. Merging, sharding, ranking, and writing to IndexedDB all happen inside a Web Worker.

- Persist incrementally. Only changed queries are sent to the worker. Cost scales with user activity, not cache size.

- Restore selectively. On reload, load only what the current page needs - not the entire cache.

Here's the architecture at a glance:

The React Query cache stays exactly where it was - it's still our in-memory source of truth at runtime. We didn't replace React Query. We built a persistence layer around it.

Instead of serializing the entire cache on the main thread, we dehydrate only the queries that changed since the last persist, send them to a Web Worker via postMessage, and let the worker handle all the heavy lifting: merging with existing shard data, distributing across shards, ranking by usage frequency, filtering stale entries, and writing to IndexedDB.
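A minimal sketch of the main-thread half of that idea follows. Part 2 covers the real design; here the class name, the message shape, and the use of `updatedAt` as the change marker are all assumptions. In a browser, `post` would typically be `(msg) => worker.postMessage(msg)`; `postMessage` itself structured-clones its argument, but only the delta crosses the boundary.

```typescript
interface DehydratedQuery {
  hash: string;
  data: unknown;
  updatedAt: number;
}

// Hypothetical message shape sent to the worker.
interface PersistMessage {
  type: "persist";
  queries: DehydratedQuery[];
}

class IncrementalPersister {
  // updatedAt of each query as of the last time it was sent to the worker
  private lastSent = new Map<string, number>();
  private post: (msg: PersistMessage) => void;

  constructor(post: (msg: PersistMessage) => void) {
    this.post = post;
  }

  // Diff the cache against what was already sent and post only the changes.
  // Returns the number of queries sent.
  flush(cache: DehydratedQuery[]): number {
    const changed = cache.filter((q) => this.lastSent.get(q.hash) !== q.updatedAt);
    if (changed.length === 0) return 0;
    changed.forEach((q) => this.lastSent.set(q.hash, q.updatedAt));
    this.post({ type: "persist", queries: changed });
    return changed.length;
  }
}

// Collect messages in an array instead of a real worker so the sketch runs anywhere.
const sent: PersistMessage[] = [];
const persister = new IncrementalPersister((msg) => {
  sent.push(msg);
});

const cacheState: DehydratedQuery[] = [
  { hash: "q1", data: { n: 1 }, updatedAt: 100 },
  { hash: "q2", data: { n: 2 }, updatedAt: 100 },
  { hash: "q3", data: { n: 3 }, updatedAt: 100 },
];

const first = persister.flush(cacheState); // 3: everything is new
cacheState[1] = { hash: "q2", data: { n: 20 }, updatedAt: 200 };
const second = persister.flush(cacheState); // 1: only q2 changed
const third = persister.flush(cacheState); // 0: nothing to send

console.log(first, second, third); // 3 1 0
```

After the first flush, the amount of data handed to the worker tracks how many queries changed, not how many exist.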

On reload, we don't restore everything. We read a single precomputed first-read cache from IndexedDB, optionally load route-specific shards, hydrate the queries into React Query, and mark them all as invalidated so they refetch in the background.
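The restore path can be sketched along the same lines. This is an illustration under stated assumptions: `ShardStore` is a hypothetical read interface over IndexedDB, the shard key names are invented, and reading the shards in parallel is a choice made for the example rather than a description of the production code.

```typescript
interface PersistedQuery {
  hash: string;
  data: unknown;
}

interface RestoredQuery extends PersistedQuery {
  isInvalidated: boolean;
}

// Hypothetical read interface over IndexedDB: one key maps to one shard.
interface ShardStore {
  read(key: string): Promise<PersistedQuery[] | undefined>;
}

// In-memory implementation so the sketch runs outside a browser.
class MemoryShardStore implements ShardStore {
  private shards: Map<string, PersistedQuery[]>;

  constructor(shards: Map<string, PersistedQuery[]>) {
    this.shards = shards;
  }

  async read(key: string): Promise<PersistedQuery[] | undefined> {
    return this.shards.get(key);
  }
}

// Read the first-read cache plus any shards the current route asks for,
// and mark every restored query invalidated so it refetches in the background.
async function restoreForRoute(
  store: ShardStore,
  routeShardKeys: string[],
): Promise<Map<string, RestoredQuery>> {
  const keys = ["first-read", ...routeShardKeys];
  const shards = await Promise.all(keys.map((key) => store.read(key)));
  const restored = new Map<string, RestoredQuery>();
  shards.forEach((shard) => {
    (shard ?? []).forEach((q) => restored.set(q.hash, { ...q, isInvalidated: true }));
  });
  return restored;
}

const shardStore = new MemoryShardStore(
  new Map<string, PersistedQuery[]>([
    ["first-read", [{ hash: "nav:me", data: {} }, { hash: "nav:orgs", data: [] }]],
    ["route:dashboard", [{ hash: "dashboard:widgets", data: [] }]],
    ["route:settings", [{ hash: "settings:profile", data: {} }]],
  ]),
);

const restoredPromise = restoreForRoute(shardStore, ["route:dashboard"]);
restoredPromise.then((restored) => console.log(restored.size)); // 3
```

The settings shard is never read on a dashboard load, so its deserialization cost is never paid on that page.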

The main thread's job during persist is lightweight: dehydrate and diff. The main thread's job during restore is also lightweight: one IndexedDB read and one hydrate call. Everything expensive happens in the worker.

In Part 2, we'll go deep into each component of this architecture: incremental diffing, the sharding strategy, the tiered restore mechanism, and the first-read cache optimization - along with the production guardrails and results.
