The Main Thread Tax: Fixing LCP Without SSR - Part 2

11 min read


Adit Shah

Member of Technical Staff


Part 2 of 2: Incremental persistence, sharded caching, tiered restore, and the results.

In Part 1, we covered the problem: a 7-second LCP in a large authenticated SPA, caused not by slow rendering but by data availability. We showed why naive IndexedDB persistence made things worse - INP spiking to 3 seconds from structured clone serialization on the main thread - and why React Query's built-in persistence options didn't fit our constraints.

We ended with a preview of the architecture: a Web Worker handling all heavy work, sharded IndexedDB storage, and selective restore on reload. This post goes deep into each component, the production guardrails that made it reliable, and the measured results.

Making persistence cheap: incremental diffing

The first design constraint was clear: persistence cost cannot grow with cache size. It has to scale with how much actually changed.

The naive approach serializes the entire cache on every persist cycle. In our app, that's hundreds of queries - most of which haven't changed since the last persist. Sending unchanged data through structured clone, across the postMessage boundary, and into a merge operation is pure waste.

Instead, we track the dataUpdatedAt timestamp for every query we've previously persisted, and only send a query to the worker when that timestamp has changed.

After the first persist, subsequent cycles typically send a small fraction of total queries - only those that received fresh data since last time. The diff itself is just a Map lookup per query: constant time, negligible cost.
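Here is a minimal sketch of that diff. The `CachedQuery` shape and the `collectChangedQueries` name are assumptions for illustration. In React Query, the corresponding values live on `query.queryHash` and `query.state.dataUpdatedAt`.

```typescript
// Simplified query shape assumed for this sketch (not React Query's full Query type).
interface CachedQuery {
  queryHash: string;
  dataUpdatedAt: number;
  data: unknown;
}

// Returns only queries whose data changed since the previous persist cycle and
// records their new timestamps. Cost is one Map lookup per query.
function collectChangedQueries(
  queries: readonly CachedQuery[],
  lastPersisted: Map<string, number>,
): CachedQuery[] {
  const changed: CachedQuery[] = [];
  for (const query of queries) {
    if (lastPersisted.get(query.queryHash) !== query.dataUpdatedAt) {
      changed.push(query);
      lastPersisted.set(query.queryHash, query.dataUpdatedAt);
    }
  }
  return changed;
}
```

This version records the new timestamp as soon as a query is selected. A more careful variant could commit timestamps only after the worker acknowledges the write, so that a failed persist gets retried on the next cycle.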

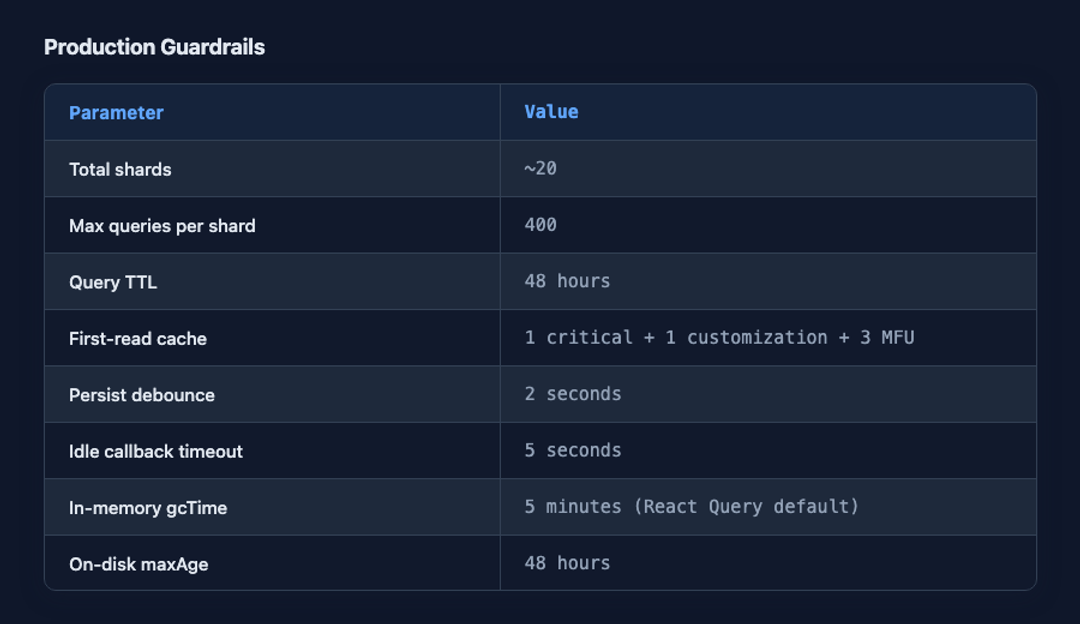

The persist path also has two layers of scheduling protection. Query cache change events are debounced (2 seconds), so rapid-fire updates from multiple API responses don't trigger individual persist cycles. And the actual persist work is wrapped in requestIdleCallback with a 5-second timeout, which defers the work to browser idle time while guaranteeing it still happens within a reasonable window.
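The two scheduling layers can be sketched as follows. `SchedulerEnv` and `createPersistScheduler` are hypothetical names. The timers are injected so the sketch runs outside a browser. In a page, `requestIdle` would wrap `requestIdleCallback(fn, { timeout })`.

```typescript
// Scheduling primitives, injected so the logic is testable outside a browser.
interface SchedulerEnv {
  setTimeout(fn: () => void, ms: number): unknown;
  clearTimeout(handle: unknown): void;
  // In a browser: requestIdleCallback(() => fn(), { timeout: timeoutMs }).
  requestIdle(fn: () => void, timeoutMs: number): void;
}

// Layer 1: debounce cache change events. Layer 2: run the persist in idle time,
// with a timeout that guarantees it still runs if the page never goes idle.
function createPersistScheduler(
  persist: () => void,
  env: SchedulerEnv,
  debounceMs = 2000,
  idleTimeoutMs = 5000,
): () => void {
  let pending: unknown = undefined;
  return function onCacheChange(): void {
    // Every new change event restarts the debounce window.
    if (pending !== undefined) env.clearTimeout(pending);
    pending = env.setTimeout(() => {
      pending = undefined;
      env.requestIdle(persist, idleTimeoutMs);
    }, debounceMs);
  };
}
```

Each cache change event calls the returned function. A burst of updates within the 2-second window therefore collapses into a single idle-time persist.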

One subtle detail: before sending queries to the worker via postMessage, we strip any non-serializable properties from query metadata. React Query allows attaching arbitrary meta objects to queries, and some of ours contained functions. The structured clone algorithm cannot clone functions, so we sanitize meta to keep only serializable values. Without this, a single query with a function in its metadata would crash the entire persist cycle.
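Here is one way to sanitize meta, assuming meta objects are acyclic. The approach is deliberately stricter than structured clone itself. It keeps only primitives, arrays, and plain objects, so values like `Date` or `Map`, which structured clone could copy, are dropped too. `sanitizeMeta` is a hypothetical helper name.

```typescript
type Serializable =
  | string
  | number
  | boolean
  | null
  | Serializable[]
  | { [key: string]: Serializable };

// Recursively keeps primitives, arrays, and plain objects; drops everything else.
// Assumes the input is acyclic (a cyclic meta object would recurse forever).
function sanitizeMeta(value: unknown): Serializable | undefined {
  if (value === null) return null;
  if (typeof value === "string" || typeof value === "number" || typeof value === "boolean") {
    return value;
  }
  if (Array.isArray(value)) {
    // Preserve array positions: unsupported elements become null.
    return value.map((item) => sanitizeMeta(item) ?? null);
  }
  if (typeof value === "object") {
    const proto = Object.getPrototypeOf(value);
    // Class instances, Dates, Maps, etc. are dropped (stricter than structured clone).
    if (proto !== Object.prototype && proto !== null) return undefined;
    const source = value as Record<string, unknown>;
    const out: { [key: string]: Serializable } = {};
    for (const key of Object.keys(source)) {
      const clean = sanitizeMeta(source[key]);
      if (clean !== undefined) out[key] = clean;
    }
    return out;
  }
  return undefined; // functions, symbols, bigint, undefined
}
```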

Sharding: for restore, not storage

If we persisted all queries into a single IndexedDB key, restore would be all-or-nothing. Read the entire blob, deserialize it, hydrate everything. Even with incremental persist, we'd be back to a full structured clone on the restore path - which is the performance-critical path.

Sharding solves this by distributing queries across approximately 20 independent IndexedDB keys. Each shard is a self-contained unit that can be read and restored independently.

Queries are routed to shards based on the first element of their query key. We use a two-tier routing strategy:

Reserved shards for high-traffic routes. Shards 0 through 2 are permanently assigned to dashboard, notifications, and works queries respectively. All queries for a given route land in the same shard, which means loading one shard gives a route everything it needs. This is deterministic and requires no runtime ranking.

Hash-distributed shards for everything else. The first element of the query key is hashed using FNV-1a, and the result is mapped to one of the remaining shards. This ensures that related queries - those sharing a key prefix like accounts or conversations - naturally land in the same shard without explicit configuration.
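Below is a sketch of the two-tier routing. It assumes exactly 20 shards and routes on `String(queryKey[0])`. The reserved assignments come from the text. The hashing over UTF-16 code units and the modulo mapping onto the remaining shards are assumptions, and the critical shard is left out.

```typescript
// The text says "approximately 20" shards; exactly 20 is assumed here.
const SHARD_COUNT = 20;
// Reserved route shards from the text: 0 = dashboard, 1 = notifications, 2 = works.
const RESERVED_SHARDS = new Map<string, number>([
  ["dashboard", 0],
  ["notifications", 1],
  ["works", 2],
]);
const FIRST_HASHED_SHARD = RESERVED_SHARDS.size;

// 32-bit FNV-1a over UTF-16 code units (identical to byte-wise FNV-1a for ASCII keys).
function fnv1a32(input: string): number {
  let hash = 0x811c9dc5; // FNV offset basis
  for (let i = 0; i < input.length; i++) {
    hash ^= input.charCodeAt(i);
    hash = Math.imul(hash, 0x01000193) >>> 0; // FNV prime; >>> 0 keeps it unsigned 32-bit
  }
  return hash;
}

// Reserved shard if the key's first element has one; otherwise a hashed shard.
// Keys sharing a first element always land together because the hash is deterministic.
function shardForKey(queryKey: readonly unknown[]): number {
  const head = String(queryKey[0]);
  const reserved = RESERVED_SHARDS.get(head);
  if (reserved !== undefined) return reserved;
  return FIRST_HASHED_SHARD + (fnv1a32(head) % (SHARD_COUNT - FIRST_HASHED_SHARD));
}
```

A `Map` is used for the reserved table rather than a plain object, so a key such as `"constructor"` cannot accidentally match an inherited property.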

Each shard is also bounded. A maximum of 400 queries per shard, enforced by evicting the least recently updated entries when the cap is exceeded. A 48-hour TTL per query, enforced during persist - the worker filters stale queries before writing. These guardrails are enforced at write time, not read time, so restore stays fast.
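The write-time guardrails could look like the following in the worker. The entry shape and function name are hypothetical. The 400-query cap and the 48-hour TTL are the values given above.

```typescript
interface ShardEntry {
  queryHash: string;
  dataUpdatedAt: number;
  data: unknown;
}

const MAX_QUERIES_PER_SHARD = 400;
const MAX_AGE_MS = 48 * 60 * 60 * 1000; // 48 hours

// Runs before a shard is written, so restore never pays for TTL or eviction checks.
function enforceShardLimits(
  entries: readonly ShardEntry[],
  now: number,
  maxAgeMs = MAX_AGE_MS,
  maxQueries = MAX_QUERIES_PER_SHARD,
): ShardEntry[] {
  // Drop queries older than the TTL.
  const fresh = entries.filter((e) => now - e.dataUpdatedAt <= maxAgeMs);
  if (fresh.length <= maxQueries) return fresh;
  // Over the cap: keep the most recently updated, evict the least recently updated.
  return fresh.sort((a, b) => b.dataUpdatedAt - a.dataUpdatedAt).slice(0, maxQueries);
}
```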

The result: restore cost is proportional to the number of shards you load, not the total amount of data stored on disk.

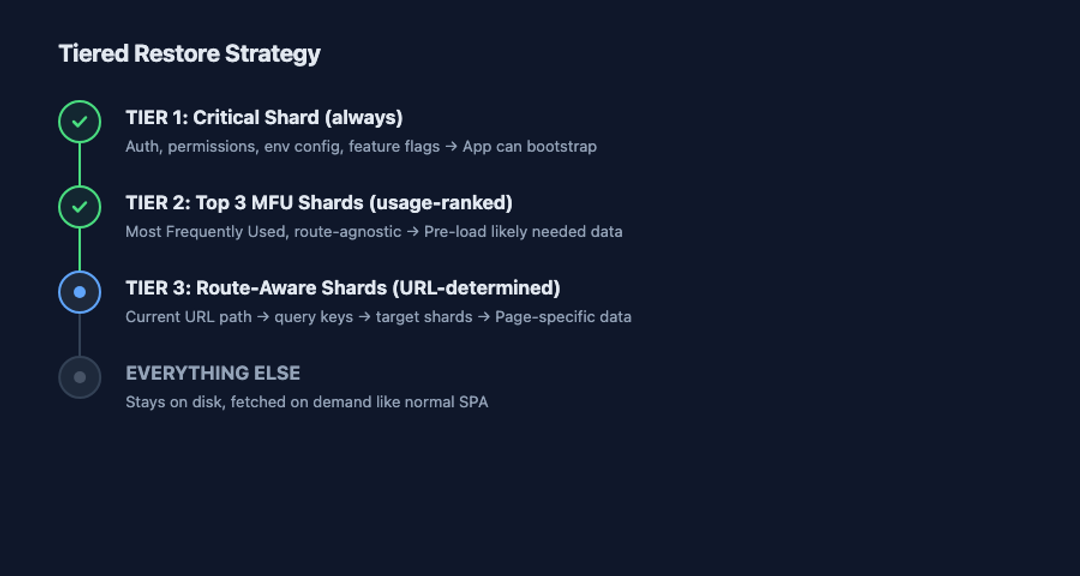

Tiered restore: loading exactly what you need

Sharding gives us the ability to load selectively. The tiered restore strategy decides what to load.

Tier 1 is the critical shard. It contains queries that every page needs regardless of route: authentication state, user permissions, environment configuration, feature flags. Without these, no page can render correctly. This shard is intentionally small - anything that isn't universally required does not belong here. If everything goes into the critical shard, you've reinvented the single blob.

Tier 2 is the top MFU shards - Most Frequently Used. During persist, the worker tracks how often each shard receives new queries and maintains a running count. On restore, we load the top 3 ranked shards. This is a heuristic, not a guarantee, but usage patterns turn out to be surprisingly stable in large applications. The same datasets tend to be accessed shortly after load, regardless of which specific page the user navigates to. The MFU count is intentionally route-agnostic: it doesn't try to predict navigation, it just observes what data the application actually uses most.
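Here is a minimal sketch of the MFU bookkeeping. It assumes the worker increments a per-shard counter for every query it writes. The tie-break by lower shard id is an assumption added to keep the ranking stable.

```typescript
// Per-shard running count of how many queries the worker has written there.
type MfuCounts = Map<number, number>;

// Called by the worker after each persist with the shard id of every query it wrote.
function recordShardWrites(counts: MfuCounts, shardIds: readonly number[]): void {
  for (const id of shardIds) {
    counts.set(id, (counts.get(id) ?? 0) + 1);
  }
}

// Highest counts first; ties go to the lower shard id so the ranking is deterministic.
function topMfuShards(counts: MfuCounts, n = 3): number[] {
  return Array.from(counts.entries())
    .sort((a, b) => b[1] - a[1] || a[0] - b[0])
    .slice(0, n)
    .map(([id]) => id);
}
```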

Tier 3 is route-aware restore. We maintain a lightweight mapping from URL path segments to the query keys each route needs.

When the app loads at /org/works, we extract the path segment, look up the mapped query keys, compute which shards contain them (in this case shard 2), and load those shards. All queries for a route are co-located in the same reserved shard, so a single IndexedDB read gives the page everything it needs. This is the correctness layer - it guarantees the LCP element gets its data even if the MFU heuristic missed the right shard.
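Below is a sketch of the route-to-shard lookup. The contents of `ROUTE_QUERY_KEYS` are hypothetical, and the parser assumes URLs shaped like `/<org>/<route>/...`. `shardFor` stands in for whatever key-to-shard routing is in use, such as the reserved-plus-FNV-1a scheme described earlier.

```typescript
type QueryKey = readonly unknown[];

// Hypothetical mapping from a route's path segment to the query keys it renders.
const ROUTE_QUERY_KEYS = new Map<string, ReadonlyArray<QueryKey>>([
  ["works", [["works", "list"], ["works", "filters"]]],
  ["dashboard", [["dashboard", "widgets"]]],
]);

// Assumes /<org>/<route>/..., so the route is the second non-empty segment.
// Returns the distinct shards that hold this route's queries, in ascending order.
function shardsForPath(
  pathname: string,
  routeKeys: ReadonlyMap<string, ReadonlyArray<QueryKey>>,
  shardFor: (key: QueryKey) => number,
): number[] {
  const segment = pathname.split("/").filter(Boolean)[1];
  const keys = segment === undefined ? undefined : routeKeys.get(segment);
  if (!keys) return []; // unknown route: nothing extra to restore
  return Array.from(new Set(keys.map(shardFor))).sort((a, b) => a - b);
}
```

Because both `works` keys route to the same reserved shard, `/org/works` resolves to a single shard and a single IndexedDB read.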

Every restored query is marked isInvalidated = true. This means components render immediately using cached data, while React Query silently refetches fresh data in the background. The user sees content instantly; the data updates transparently. This is stale-while-revalidate at the application level.
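Marking restored queries as invalidated can be a plain transform before hydration. The types below are a hand-written subset of React Query's dehydrated query shape, which is an assumption and not the library's exported types. The result would then be passed to React Query's `hydrate` as the `queries` of a dehydrated state.

```typescript
// Hand-written subset of a dehydrated query (assumed shape, not React Query's own types).
interface PersistedQueryState {
  data: unknown;
  dataUpdatedAt: number;
  isInvalidated: boolean;
}
interface PersistedQuery {
  queryKey: readonly unknown[];
  queryHash: string;
  state: PersistedQueryState;
}

// Returns copies with isInvalidated = true. Components can render the cached data
// immediately, and because invalidated queries count as stale, React Query
// refetches them in the background once a component observes them.
function markRestoredAsStale(queries: readonly PersistedQuery[]): PersistedQuery[] {
  return queries.map((q) => ({ ...q, state: { ...q.state, isInvalidated: true } }));
}
```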

Everything not in these three tiers stays on disk. If the user navigates to a page whose data wasn't pre-loaded, it fetches from the network like a normal SPA. No penalty, no complexity - just the standard path.

The first-read cache: collapsing N+1 reads to one

Even with tiered restore, we were making multiple IndexedDB reads on the startup hot path: the critical shard, plus the top 3 MFU shards. Each read means scheduling an async operation, running structured clone, and touching the heap. Individually these reads aren't huge, but together they fragment the critical path into multiple main-thread interruptions.

The solution was to precompute a merged first-read cache during persist. The worker already knows which shards are critical and which are top-ranked. After writing individual shards, it reads back the top MFU shards, combines them with the critical shard, and writes the merged result as a single IndexedDB key.
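Here is a sketch of the merge step, assuming each shard holds an array of entries keyed by `queryHash`. Because routing is deterministic, a query should not appear in two shards, so the newest-wins rule is only a defensive assumption. The names are hypothetical.

```typescript
interface StoredQuery {
  queryHash: string;
  dataUpdatedAt: number;
  data: unknown;
}
interface Shard {
  id: number;
  queries: StoredQuery[];
}

// Combines the critical shard with the top MFU shards into one record that the
// worker can write under a single IndexedDB key. Duplicate hashes keep the newest copy.
function buildFirstReadCache(
  critical: Shard,
  topShards: readonly Shard[],
): { shardIds: number[]; queries: StoredQuery[] } {
  const byHash = new Map<string, StoredQuery>();
  for (const shard of [critical, ...topShards]) {
    for (const q of shard.queries) {
      const existing = byHash.get(q.queryHash);
      if (!existing || q.dataUpdatedAt > existing.dataUpdatedAt) byHash.set(q.queryHash, q);
    }
  }
  return {
    // Recorded so restore can skip route-aware shards this first read already covers.
    shardIds: [critical.id, ...topShards.map((s) => s.id)],
    queries: Array.from(byHash.values()),
  };
}
```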

On reload, restore becomes: one IndexedDB read, one structured clone, one hydrate. Route-aware shards are resolved after the first read and loaded additively if needed.

The win isn't fewer bytes - the total data volume is the same. It's fewer interruptions on the main thread at the moment that matters most.

We traded slightly more work during persist - which happens in a Web Worker, off the main thread, at a non-critical time - for dramatically less work during restore, which happens on the main thread at the most performance-sensitive moment of the application lifecycle.

Production hardening

The architecture described above is the steady-state design. Getting there required several weeks of production hardening after the initial rollout. A few patterns were critical.

Sequential processing in the worker. The worker processes persist messages one at a time through a promise queue. Without this, concurrent persist cycles would each hold copies of shard data in memory simultaneously, causing memory spikes. Between each shard write, the worker yields to the event loop (setTimeout(0)) to give the garbage collector an opportunity to reclaim memory from the previous shard's data.
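The sequential processing pattern can be sketched with a promise chain. `createSerialQueue`, `persistShards`, and the injected `writeShard` are hypothetical names. The yield is the `setTimeout(0)` described above.

```typescript
// Resolves on a later macrotask, giving the garbage collector a chance to run.
const yieldToEventLoop = (): Promise<void> => new Promise((resolve) => setTimeout(resolve, 0));

// Runs tasks strictly one at a time, in arrival order, by chaining each onto the last.
function createSerialQueue(): (task: () => Promise<void>) => Promise<void> {
  let tail: Promise<void> = Promise.resolve();
  return function enqueue(task) {
    const run = tail.then(task);
    // Keep the chain alive if a task fails, so one bad message can't wedge the queue.
    tail = run.catch(() => undefined);
    return run;
  };
}

// Hypothetical persist handler: writes shards one by one, yielding between writes
// so the previous shard's data can be collected before the next one is built.
async function persistShards(
  shardIds: readonly string[],
  writeShard: (id: string) => Promise<void>,
): Promise<void> {
  for (const id of shardIds) {
    await writeShard(id);
    await yieldToEventLoop();
  }
}
```

In the worker, each incoming persist message would be handed to `enqueue`, so two messages arriving close together never hold shard data in memory at the same time.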

Closure retention prevention. When the persister is cleaned up - for example, during logout or org switching - we explicitly null out the worker's onmessage and onerror handlers before calling terminate(). Without this, closures capturing the persister context would be retained by the worker reference, preventing garbage collection of the entire context object graph.
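Here is a sketch of the cleanup order. `WorkerLike` is a minimal structural stand-in for the DOM `Worker`, so the example runs outside a browser. `WorkerPersister` is a hypothetical wrapper.

```typescript
// Minimal structural stand-in for the DOM Worker (only what this sketch touches).
interface WorkerLike {
  onmessage: unknown;
  onerror: unknown;
  terminate(): void;
}

class WorkerPersister {
  private worker: WorkerLike | null;

  constructor(worker: WorkerLike, onPersisted: (data: unknown) => void) {
    this.worker = worker;
    // These handlers close over onPersisted and, through it, the app's persister context.
    worker.onmessage = (ev: { data: unknown }) => onPersisted(ev.data);
    worker.onerror = () => undefined;
  }

  // Detach handlers before terminating so the worker object no longer references
  // closures over the persister context, then drop our own reference too.
  dispose(): void {
    const worker = this.worker;
    if (!worker) return; // already disposed
    worker.onmessage = null;
    worker.onerror = null;
    worker.terminate();
    this.worker = null;
  }
}
```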

Cache versioning. A cache buster string is embedded in every persisted shard. When we make incompatible changes to query shapes or normalization logic, we increment the version. On restore, if the buster doesn't match, the entire cache is discarded and the app starts fresh.
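Below is a minimal version of the buster check, assuming every shard stores the buster string it was written with. The buster value and the `validateShards` name are hypothetical.

```typescript
// Current cache version (hypothetical value). Bump it on incompatible changes.
const CACHE_BUSTER = "v3";

interface PersistedShard {
  buster: string;
  queries: unknown[];
}

// Returns every query if all shards were written with the current buster.
// Returns null on any mismatch: the caller should then discard the whole cache.
function validateShards(
  shards: readonly PersistedShard[],
  buster: string = CACHE_BUSTER,
): unknown[] | null {
  const queries: unknown[] = [];
  for (const shard of shards) {
    if (shard.buster !== buster) return null;
    queries.push(...shard.queries);
  }
  return queries;
}
```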

Feature-flag control. Every tunable parameter - shard count, queries per shard, max age, number of MFU shards to block on - is controlled by a feature flag. This gave us gradual rollout, per-org tuning, and instant rollback without a deploy.
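Here is a sketch of resolving those parameters from flags with safe fallbacks. The flag names, the `FlagReader` signature, and the minimum-value checks are hypothetical. The defaults are the values described in this post.

```typescript
// Hypothetical flag lookup: returns undefined when a flag is not set for this org.
type FlagReader = (name: string) => number | undefined;

interface PersistConfig {
  shardCount: number;
  maxQueriesPerShard: number;
  maxAgeMs: number;
  mfuShardsToBlockOn: number;
}

// Defaults taken from the values described in the post.
const DEFAULT_CONFIG: PersistConfig = {
  shardCount: 20,
  maxQueriesPerShard: 400,
  maxAgeMs: 48 * 60 * 60 * 1000,
  mfuShardsToBlockOn: 3,
};

function resolvePersistConfig(readFlag: FlagReader): PersistConfig {
  // A missing or out-of-range flag falls back to the default instead of breaking restore.
  const pick = (name: string, fallback: number, min: number): number => {
    const value = readFlag(name);
    return value !== undefined && Number.isFinite(value) && value >= min ? value : fallback;
  };
  return {
    // At least 4, leaving room for hashed shards after the 3 reserved ones.
    shardCount: pick("persist_shard_count", DEFAULT_CONFIG.shardCount, 4),
    maxQueriesPerShard: pick("persist_max_queries_per_shard", DEFAULT_CONFIG.maxQueriesPerShard, 1),
    maxAgeMs: pick("persist_max_age_ms", DEFAULT_CONFIG.maxAgeMs, 0),
    mfuShardsToBlockOn: pick("persist_mfu_shards_to_block_on", DEFAULT_CONFIG.mfuShardsToBlockOn, 0),
  };
}
```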

The numbers

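These are the production guardrails we run with today: about 20 shards, a maximum of 400 queries per shard, a 48-hour max age per query, and the top 3 MFU shards blocked on during restore.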

These numbers aren't magic. They're guardrails. The important thing isn't the exact values. It's that restore cost is bounded, predictable, and enforced - not a function of how much data the application has accumulated over time.

Results

After rolling this out across production, measured on modern machines, stable over 4+ weeks:

LCP P75 dropped from 7 seconds to under 2.5 seconds on full page reloads. The improvement was stable because restore cost is bounded by design, not by how much data happens to be cached.

INP P75 returned to under 150ms - back to the pre-caching baseline. Before the optimization, any interaction that triggered a cache write (navigating, receiving socket events) would block the main thread for 1-3 seconds during structured clone serialization. After moving all heavy work to the Web Worker, we no longer see any long tasks (tasks over 50ms) during persist or restore. The loader animation no longer freezes.

Restore memory footprint dropped by ~6x. Without selective restore, the app would hydrate 8,000-10,000 queries into memory on reload - the entire persisted cache. With tiered restore, we load at most 1,000-1,500 queries even in the worst case: the critical shard, top 3 MFU shards, and route-specific data. That's roughly one-sixth of total persisted queries, and it's enough to achieve LCP on every page. Inactive queries are evicted from the in-memory cache after 5 minutes (React Query's default gcTime), while the on-disk cache lives for 48 hours - independent lifecycles with independent costs.

This is not a weekend project. The architecture took two engineers roughly three months end-to-end - design, build, production hardening included. The sharding logic, the worker message queue, the MFU ranking, the first-read cache precomputation - each is individually straightforward, but the system as a whole requires ongoing maintenance as query shapes and usage patterns evolve. That trade-off was worth it for our scale. For smaller apps, React Query's built-in persistence is almost certainly sufficient.

What we learned

Here's what stuck with us after three months of building, breaking, and fixing this system:

Decompose LCP before optimizing it. The bottleneck isn't always where you assume. For us, rendering was fast. Data availability was slow. Optimizing the wrong stage wastes effort without moving the metric.

Async I/O does not mean free CPU. IndexedDB is asynchronous, but structured clone serialization runs synchronously on whichever thread makes the call, which is the main thread unless you move the work elsewhere. Any time you move large object graphs through postMessage, IndexedDB, or any API that uses structured clone, you're paying a synchronous CPU cost that scales with object complexity - even though the I/O itself is non-blocking.

The cost model matters more than the implementation. Shifting persistence from O(cache size) to O(changed queries) is what made it scale. Shifting restore from O(total stored data) to O(data needed for this page) is what made it fast. The specific technologies - IndexedDB, Web Workers - are interchangeable. The cost model is the design.

Web Workers aren't just for heavy computation. We don't do anything computationally exotic in our worker. It merges objects, writes to IndexedDB, sorts arrays. What matters is that none of this work happens on the main thread, at times when users are trying to interact with the application.

Build for restore-time control, not storage optimization. Sharding without selective restore is just added complexity. The value of sharding is entirely in the ability to load exactly what you need and leave everything else on disk.

The client is a system. Treat it like one. When SSR isn't viable, the architecture of your client-side data layer determines your performance ceiling. Caching, persistence, and restore aren't afterthoughts - they're infrastructure.
