Chatbot vs Conversational AI: why the answer is neither


A chatbot is a software program that simulates human conversation using scripted rules or AI.

Conversational AI is the broader technology – NLP, machine learning, and dialogue management – that powers modern chatbots.

In 2026, both are giving way to agentic AI: systems that don't just converse, but reason, decide, and take autonomous action. Most teams searching this comparison are asking the wrong question. The real question isn't whether to pick a chatbot or conversational AI – it's whether either one can actually close a ticket.

TL;DR

AI made everything faster, but it didn't make anything clearer. The question that matters is whether a chatbot or conversational AI can actually close a ticket. Neither can.

Gen 1 chatbots follow scripts and handle a fixed set of known questions. When a user goes off-script, they hit a wall.

Gen 2 conversational AI understands intent and generates natural responses. It's a real improvement. But it's still read-only: it surfaces answers, drafts replies, and hands everything to a human to act on.

Gen 3 agentic AI reads, reasons, and writes back. It doesn't suggest a refund. It processes it.

The architectural difference comes down to one question: can your AI write back to your CRM, close a ticket, and update your engineering tools without a human completing each step?

BILL, a financial operations platform serving six million businesses, had a real self-serve resolution rate of 3% before deploying Computer, DevRev's agentic AI. Afterward, 70%+ of its L1 tickets were resolved without any human involvement. That is not an improvement on conversational AI. It is a different category.

What is a chatbot?

Most people have interacted with a chatbot without realizing that the term covers two very different kinds of systems.

Rule-based chatbots operate on decision trees and keyword triggers. They're effectively an IVR phone menu in text form – fast to deploy, good at handling a fixed set of known questions, and brittle the moment a user goes off-script. Ask one something unexpected, and it either loops back to the menu or hands off to a human.
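To make that brittleness concrete, here is a minimal sketch of the keyword-trigger pattern. The trigger words, canned answers, and the `rule_based_reply` function are hypothetical illustrations, not any vendor's implementation.

```python
# Hypothetical FAQ triggers; a real deployment would load these from configuration.
KEYWORD_TRIGGERS = {
    "password": "To reset your password, open Settings > Security > Reset password.",
    "invoice": "You can download invoices from Billing > History.",
    "hours": "Support is available Monday to Friday, 9am to 5pm.",
}

FALLBACK = "Sorry, I didn't understand. Type 'menu' to see options, or 'agent' for a human."


def rule_based_reply(message: str) -> str:
    """Return the first scripted answer whose keyword appears in the message."""
    text = message.lower()
    for keyword, answer in KEYWORD_TRIGGERS.items():
        if keyword in text:
            return answer
    # Off-script input: no rule matches, so the bot can only fall back to the menu.
    return FALLBACK
```

"I forgot my password" gets the scripted answer, but "I can't log in", which means the same thing, matches no keyword and lands on the fallback.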

AI chatbots use NLP and machine learning to understand intent and generate contextual responses. They're meaningfully more flexible. A user doesn't have to phrase the question exactly right. The chatbot can interpret what they mean, not just what they typed.

But here's the limitation that applies to both: they can respond to what you're asking, but neither can do anything about it.

They can tell a customer their order is delayed. They can't fix the delay, update the record, or move the ticket forward. That requires a human completing the loop every single time.

For teams with low ticket complexity and a primary goal of reducing inbound volume, that ceiling may feel acceptable. But consider what it actually means at scale: every single resolved issue, no matter how routine, requires an agent to read a suggestion, switch to another system, take the action, and close the loop manually. That overhead compounds.

This is why support teams that invest in Gen 2 tools often see deflection rates improve slightly while resolution rates stay flat. Deflection means the customer accepted an incomplete answer or gave up. Resolution means the issue is closed. The gap between those two metrics is where agent time disappears.
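The two metrics can diverge sharply on the same set of conversations. The sketch below assumes a hypothetical `Conversation` record with three flags and computes deflection and resolution in the sense used above; the sample is invented for illustration, not measured data.

```python
from dataclasses import dataclass


@dataclass
class Conversation:
    escalated_to_human: bool  # a human agent got involved
    issue_closed: bool        # the underlying issue was actually fixed
    closed_by_ai: bool        # the AI took the closing action itself


def deflection_rate(convs: list[Conversation]) -> float:
    """Share of conversations that never reached a human, whatever the outcome."""
    return sum(not c.escalated_to_human for c in convs) / len(convs)


def resolution_rate(convs: list[Conversation]) -> float:
    """Share of conversations where the AI itself closed the issue."""
    return sum(c.issue_closed and c.closed_by_ai for c in convs) / len(convs)


# Invented sample of 10 conversations, for illustration only.
sample = (
    [Conversation(False, False, False)] * 6   # gave up or accepted an incomplete answer
    + [Conversation(False, True, True)]       # the AI closed the issue
    + [Conversation(True, True, False)] * 3   # a human finished the job
)

print(f"deflection={deflection_rate(sample):.0%}, resolution={resolution_rate(sample):.0%}")
# prints "deflection=70%, resolution=10%"
```

A dashboard reporting only the first number would call this a success, even though nine of the ten issues were either abandoned or finished by a person.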

What is conversational AI?

Conversational AI is the underlying technology stack, not a single product. It's the set of capabilities – NLP, NLU, NLG, dialogue management, and ML – that power modern chatbots, voice assistants, and AI copilots.

- NLP (natural language processing) – parses and interprets language

- NLU (natural language understanding) – identifies intent behind the message

- NLG (natural language generation) – constructs a coherent, contextual response

- Dialogue management – maintains context across multiple turns in a conversation

- ML – improves the system's accuracy over time through interaction with data

It's worth clarifying the relationship: a chatbot is one application of conversational AI. Voice assistants like Alexa and Siri, AI copilots, virtual agents, and conversation assistants are others. Not all chatbots are conversational AI – some are still purely rule-based.

The types of conversational AI you're most likely to encounter in a business context:

- Text-based chatbots

- Voice assistants

- AI agent assist / copilot tools

- Virtual agents

Conversational AI is a real leap from rule-based chatbots. It handles context, manages multi-turn conversations, and produces natural responses that don't feel like they came from a decision tree. But it's still fundamentally a conversation tool. It answers. It doesn't resolve.

Chatbot vs conversational AI – the key differences

The pattern is the point: each generation is defined by what it can't do. Chatbots can't understand context. Conversational AI can't take action. Agentic AI removes both ceilings.

Understanding the architectural difference between chatbots and AI agents comes down to one question: is the system read-only, or can it read and write? Everything else – resolution rates, memory, learning – follows from that answer.

Is either one enough?

You came here to choose between a chatbot and conversational AI. But in 2026, that's like choosing between a typewriter and a word processor when everyone else has moved to collaborative docs.

The more useful question isn't which generation of conversational tool is better. It's whether either one was ever designed to resolve. Both share three ceilings that no amount of LLM fine-tuning can raise:

1. Read-only architecture

Both chatbots and conversational AI retrieve information from a knowledge base. They can surface an answer, draft a response, or suggest a next step. What they can't do is act on it. They can't update your CRM, close the ticket in your helpdesk, create a Jira issue, or process a refund. Every loop has to be closed by a human. If an agent can't write back, it's a glorified search bar – regardless of how fluent the conversation feels.
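The read-only versus read-write distinction fits in a few lines. The `Ticket` type and both functions are hypothetical; in a real deployment the writes would go through the helpdesk, CRM, or payment system's own APIs rather than mutating an in-memory object.

```python
from dataclasses import dataclass


@dataclass
class Ticket:
    id: str
    status: str = "open"
    refund_issued: bool = False


def read_only_assistant(ticket: Ticket) -> str:
    """Gen 1/Gen 2 pattern: produce a suggestion; a human must take the action."""
    return f"Suggested next step for {ticket.id}: issue a refund and close the ticket."


def read_write_agent(ticket: Ticket, can_refund: bool) -> list[str]:
    """Gen 3 pattern: perform the actions itself, within its permissions."""
    if not can_refund:
        # Without write permission, the agent escalates instead of acting.
        return ["escalated: refund requires approval"]
    ticket.refund_issued = True
    ticket.status = "closed"
    return ["refund issued", "ticket closed"]
```

After `read_only_assistant` runs, the ticket is still open; after `read_write_agent` runs with permission, it is closed with the refund recorded.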

2. Deflection ≠ resolution

Deflection usually means the customer gave up, accepted an incomplete answer, or was handed off to a human. It doesn't mean the issue was resolved.

Before deploying Computer, BILL's real self-serve resolution rate sat at 3%. After deploying Computer, they achieved an AI resolution rate of 70%+ of L1 tickets, without a human in the loop.

That gap isn't a chatbot problem or a conversational AI problem specifically. It's an architecture problem. The common customer support challenges that cause support teams to plateau – repeat contacts, low first-contact resolution, escalation overhead – are almost all downstream of this single constraint.

3. Stateless or session-only memory

Chatbots have no memory. Conversational AI remembers within a session. Neither carries context across channels. A customer who chats on Monday and emails on Wednesday has to start over on Wednesday, repeating their context, their history, and their issue – not because they're impatient, but because the tool was never designed to remember them.
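The difference comes down to what the memory is keyed on. In this minimal sketch, hypothetical `SessionMemory` and `CustomerMemory` classes key context on a channel session and on a customer identifier respectively. Resolving that identifier across channels (matching an email address to a chat user, for example) is the hard part in practice and is simply assumed here.

```python
from collections import defaultdict


class SessionMemory:
    """Session-only pattern: context lives as long as one channel session."""

    def __init__(self) -> None:
        self.history: dict[tuple[str, str], list[str]] = defaultdict(list)

    def remember(self, channel: str, session_id: str, note: str) -> None:
        self.history[(channel, session_id)].append(note)

    def recall(self, channel: str, session_id: str) -> list[str]:
        return list(self.history.get((channel, session_id), []))


class CustomerMemory:
    """Cross-channel pattern: context is keyed on the customer, not the session."""

    def __init__(self) -> None:
        self.history: dict[str, list[tuple[str, str]]] = defaultdict(list)

    def remember(self, customer_id: str, channel: str, note: str) -> None:
        self.history[customer_id].append((channel, note))

    def recall(self, customer_id: str) -> list[tuple[str, str]]:
        return list(self.history.get(customer_id, []))
```

If Monday's chat is stored in both, Wednesday's email session gets nothing back from `SessionMemory.recall("email", ...)`, while `CustomerMemory.recall(...)` returns the chat note for that customer.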

Across production deployments, the most consistent agentic AI failure mode isn't the AI itself. It's the data architecture underneath it. The conversation layer is a solved problem. The memory and action layers are where Gen 1 and Gen 2 tools run out of road.

The question isn't chatbot vs conversational AI. The question is: can your AI write back?

What agentic AI does differently

Gen 3 doesn't improve on the conversational AI model. It's a different architecture.

Where chatbots and conversational AI are read-only retrieval systems, agentic AI is a read-write reasoning system – one that connects to your data in real time, reasons across systems, and acts on it. The progression looks like this:

- Gen 1 (chatbots) lives in search: keyword match to a FAQ article

- Gen 2 (conversational AI) lives in answers: an LLM generates a contextual response

- Gen 3 (agentic AI) lives in actions plus accurate answers: it reasons over a knowledge graph, writes back to systems, and closes the loop

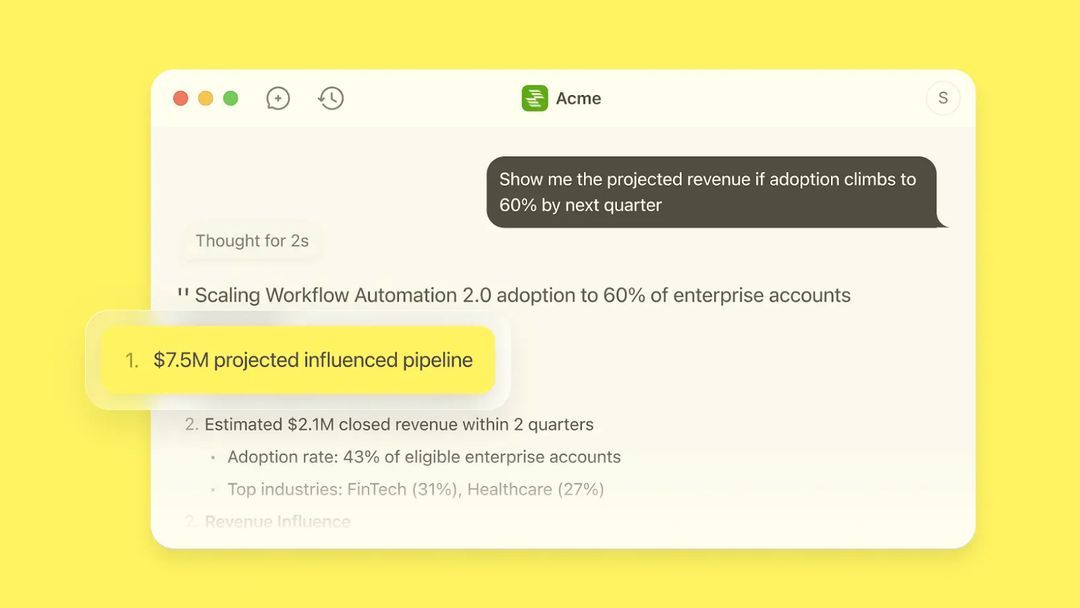

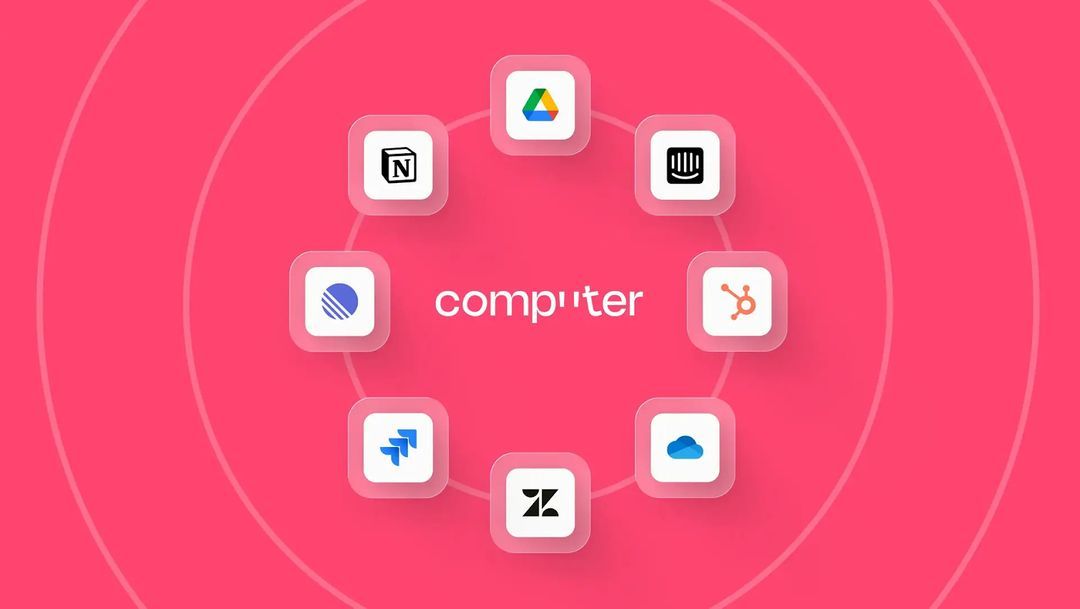

Computer, by DevRev, is built on the third architecture. Four components work together in the way a real teammate would:

Computer Memory is a live, permission-aware knowledge graph that connects customers, products, tickets, and engineering work into a single, connected layer. It is not a static knowledge base that a team has to maintain manually. Every resolved interaction feeds back into the graph automatically, so the system gets more accurate with every ticket it handles.

It is what makes Team Intelligence possible: every agent and team member works from a shared, current picture of every customer, not isolated session logs from a single channel.
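Permission-aware traversal is what the evaluation questions about tracing entity relationships and lower-permission users are probing. The sketch below is a generic illustration, not how Computer Memory is implemented: a hypothetical graph of customers, tickets, and engineering issues, with a hypothetical `REQUIRED_ROLE` map, is traversed breadth-first so that nodes a user cannot see are neither returned nor used as stepping stones.

```python
from collections import deque

# Hypothetical entity graph linking a customer to tickets and engineering issues.
EDGES = {
    "customer:acme": ["ticket:T-1", "ticket:T-2"],
    "ticket:T-1": ["issue:ENG-9"],
    "ticket:T-2": [],
    "issue:ENG-9": [],
}

# Role required to see a node; nodes not listed here are visible to everyone.
REQUIRED_ROLE = {"issue:ENG-9": "engineering"}


def visible(node: str, roles: set[str]) -> bool:
    required = REQUIRED_ROLE.get(node)
    return required is None or required in roles


def related_entities(start: str, roles: set[str]) -> list[str]:
    """Breadth-first traversal that skips any node the caller is not allowed to see."""
    if not visible(start, roles):
        return []
    seen, order, queue = {start}, [], deque([start])
    while queue:
        node = queue.popleft()
        order.append(node)
        for neighbor in EDGES.get(node, []):
            if neighbor not in seen and visible(neighbor, roles):
                seen.add(neighbor)
                queue.append(neighbor)
    return order
```

A support user sees the customer and both tickets; a user who also holds the `engineering` role additionally sees the linked engineering issue.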

AirSync is how Computer stays connected to the rest of your stack. It maintains real-time two-way sync with Salesforce, ServiceNow, Jira, Slack, email clients, and more. Unlike API integrations that pull data in on a schedule, AirSync reads and writes in real time, so every action Computer takes is reflected across every connected system immediately, with permission checks and full audit trails at the data layer.

When Computer closes a ticket, updates a CRM record, or creates an issue in your project management tool, every connected system knows about it instantly.
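The write-once, reflect-everywhere behavior can be sketched as a hub that checks permissions, applies a change to every connected system, and appends an audit entry. `Connector`, `SyncHub`, and the `writers` set are hypothetical, in-process stand-ins; this is not AirSync's API, and real two-way sync also has to handle inbound changes from each system, retries, and conflicting edits.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Connector:
    """Hypothetical stand-in for one connected system (CRM, helpdesk, issue tracker)."""
    name: str
    records: dict[str, dict] = field(default_factory=dict)

    def apply(self, record_id: str, changes: dict) -> None:
        # Create the record if needed, then merge the changed fields into it.
        self.records.setdefault(record_id, {}).update(changes)


@dataclass
class SyncHub:
    """Hypothetical hub: one permission-checked write is applied to every connector."""
    connectors: list[Connector]
    writers: set[str]  # actors allowed to write
    audit_log: list[dict] = field(default_factory=list)

    def write(self, actor: str, record_id: str, changes: dict) -> bool:
        entry = {
            "actor": actor,
            "record": record_id,
            "changes": dict(changes),
            "at": datetime.now(timezone.utc).isoformat(),
        }
        if actor not in self.writers:
            # Denied writes are audited too, and no system is touched.
            self.audit_log.append({**entry, "result": "denied"})
            return False
        for connector in self.connectors:
            connector.apply(record_id, changes)
        self.audit_log.append({**entry, "result": "applied"})
        return True
```

Routing every change through one permission check and one audit append is the design choice that keeps the connected systems and the audit trail from drifting apart.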

With Computer, a customer who contacts support on Monday and follows up by email on Wednesday does not start over. Computer holds the full context of both interactions without the customer repeating themselves. Not as a chat log sitting in one tool, but as a living customer record that every agent and team member can access the moment they need it, across every channel.

The three-way scenario that shows what this means in practice:

Results from teams running Computer in production:

These aren't chatbot or conversational AI numbers. They're what customer service automation looks like when the underlying architecture can actually close the loop.

Which should you choose?

Most teams pick the wrong tool because they're solving for the wrong metric. Here's how to pick right.

Choose a chatbot (Gen 1) if:

- Your use case is simple: FAQ deflection only

- Your support ticket volume is under 50 tickets per day

- You need a fast, low-cost solution for a well-defined, stable set of questions that rarely change

Choose conversational AI (Gen 2) if:

- You need multi-turn, contextual conversations with nuanced intent handling

- Your team wants AI copilot assistance rather than full automation

- You're already in a chat-first ecosystem and want AI to assist agents, not replace L1 handling

Choose agentic AI (Gen 3) if:

- Your agents spend more than 30% of their time on L1 tickets that follow predictable patterns

- You need AI that acts, not just answers

- You want resolution rates, not deflection rates

- You need 2-way sync across your existing tech stack

The decision framework for evaluating any AI agent ultimately comes back to the same five questions:

- Can it act end-to-end?

- What data architecture powers it?

- What does its memory layer actually hold?

- Can it trace entity relationships?

- What happens when a lower-permission user asks a sensitive question?

Tools that pass all five are Gen 3.

Stop deflecting and start resolving. See what Computer can do for your team: Book a demo
