How to build a Product-Led Growth (PLG) operating system?

Madhukar Kumar

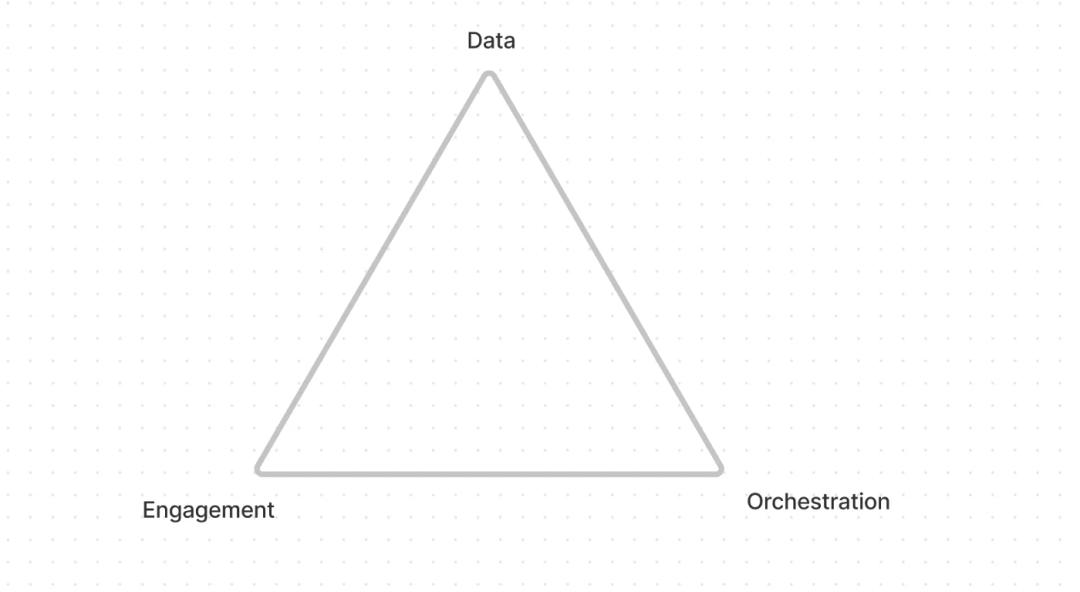

The three-legged PLG architecture

I have written about the “Why” of Product-led Growth (PLG) and some fundamental principles in action in my previous blogs. In this article, let’s look at the “What” and the “How.” In other words, how do we go about building an operating system that powers PLG?

Before we get into the architecture details, though, I like to start with defining what problems we are trying to solve and, consequently, the requirements to solve those problems. In the end, as PLG practitioners, we are looking to drive more users to discover our product, become users of the free tier or trial, and eventually, become long-term customers.

In my mind, to build an operating system that helps us achieve this, there are three main requirements:

- A website that is easy to update and manage, and that you can use to engage effectively with your users and customers.

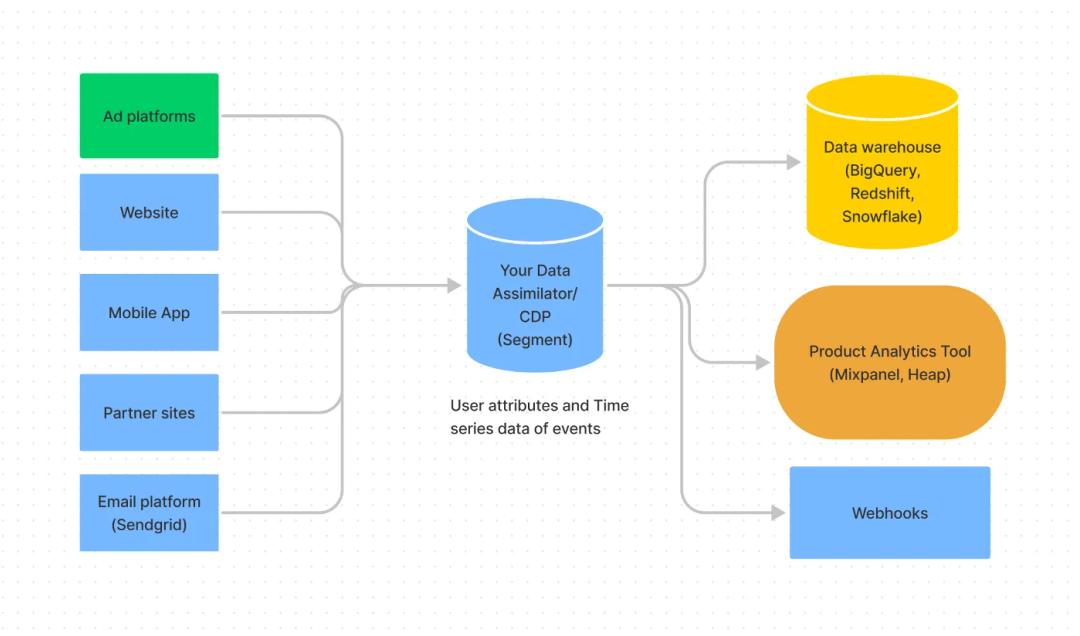

- A single and real-time view of visitors and customers and their activities.

- A way to orchestrate actions based on users’ activities and attributes.

Three legs of a PLG architecture

Website

If we further decompose the requirements for a website, I feel the following ones are a must:

- A fast website where changes can be made as often as needed in a day without downtime, whether these changes are code fixes and improvements by the developers or content changes made by the marketing team.

- An automated workflow to review, test, and publish the changes, with the ability to roll back on demand.

- Finally, a way for website visitors and customers to talk to humans on demand when they need help.

In my past experience, I struggled with the Content Management Systems (CMS) that most marketing organizations use, because making quick changes becomes increasingly difficult over time. I remember having discussions with web marketing teams that required three to four weeks of lead time even for creating and rolling out a simple landing page. This is primarily because there is little separation between code and content. The two are managed by different teams with different skill sets, which adds complexity to every deployment. In addition, as the tech stack gets bulkier over time, the performance of the web pages starts to suffer.

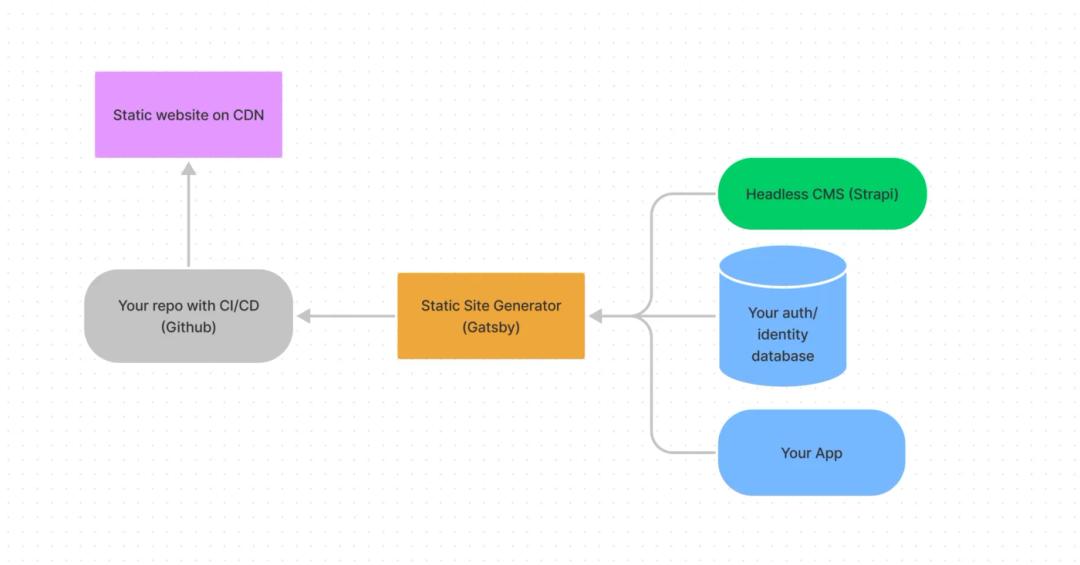

To separate code from content, you can use a headless CMS to store all your content, with a visual UI that lets content writers make quick changes without requiring any development skills. There are quite a few headless CMSes out there, but the one we at DevRev love is an open-source project called Strapi. It is a Node.js-based app that you can use out of the box, with an easy-to-use UI and support for Markdown, and it lets you create content types within minutes.

When it comes to the rest of the web stack that consumes the content from the headless CMS, I am a big fan of the JAM (JavaScript, APIs, and Markup) stack. There are several good frameworks for this architecture, like Hugo, Jekyll, Next.js, and my personal favorite, Gatsby.

Some of the JAM Stack choices

At DevRev we settled on Gatsby because it is a Node.js-based framework that allows you to write your entire site in React, and at build time Gatsby creates static HTML, CSS, and JS files. There are some significant advantages to this architecture:

- Hosting these files is dead simple: you can take the output files and dump them in an S3 bucket, host them on an extremely fast web server, or use a CDN deployment like Fastly.

- Since the website is a bunch of static files, the performance is crazy fast, which is also great for SEO. In addition, with JS includes you can easily integrate with systems like Intercom that enable conversational functionalities on your website.

- If your code is on GitHub, you can create a build-time pipeline that automatically builds and deploys your entire site. We have set this up so that when a Pull Request (PR) is approved, it kicks off a build and deploys the site only when the build is successful. This also enables zero-downtime deployment.

- Separation of code and content: you can make REST calls to your headless CMS to grab all content at build time. This means your developers can just focus on code (Gatsby) while your content writers can work on content through the UI or Markdown. When a change is made in either code or content, you can trigger a workflow to kick off a deployment, so your website can be updated as many times as you want in a day with zero downtime.

- Finally, since the site is built of React components, you can still create dynamic functionalities by making API calls to your internal systems or using Server-Side Rendering (SSR) functionalities.
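
The separation of code and content described above can be sketched as a small build step. This is only an illustration, not how Gatsby or Strapi actually expose content: the `/articles` endpoint, the `Article` shape, and the function names are all assumptions made for the example, and the article body is treated as plain text rather than rendered Markdown.

```typescript
// Hypothetical shape of one content entry returned by the headless CMS.
interface Article {
  slug: string;
  title: string;
  body: string;
}

// Any function that resolves a URL to parsed JSON. It is injected so the
// build step can be exercised without a running CMS.
type JsonFetcher = (url: string) => Promise<unknown>;

// Default fetcher that uses the global fetch available in Node 18+.
const httpFetcher: JsonFetcher = async (url) => {
  const res = await fetch(url);
  if (!res.ok) {
    throw new Error(`CMS request failed with status ${res.status}`);
  }
  return res.json();
};

// Escape text so CMS content cannot inject markup into the page.
function escapeHtml(text: string): string {
  return text
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;");
}

// Code side: the template is owned by developers.
function renderPage(article: Article): string {
  const title = escapeHtml(article.title);
  const body = escapeHtml(article.body);
  return (
    `<!doctype html><html><head><title>${title}</title></head>` +
    `<body><h1>${title}</h1><p>${body}</p></body></html>`
  );
}

// Build step: pull all content once, then emit one static file per entry.
// Returns a map from output path to file contents.
async function buildPages(
  cmsUrl: string,
  fetchJson: JsonFetcher = httpFetcher
): Promise<Record<string, string>> {
  const data = await fetchJson(`${cmsUrl}/articles`); // assumed endpoint
  if (!Array.isArray(data)) {
    throw new Error("Expected the CMS to return an array of articles");
  }
  const pages: Record<string, string> = {};
  for (const article of data as Article[]) {
    pages[`${article.slug}/index.html`] = renderPage(article);
  }
  return pages;
}
```

Because the content is fetched only at build time, a content edit and a code change both end in the same step: rebuild, then publish the resulting static files.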

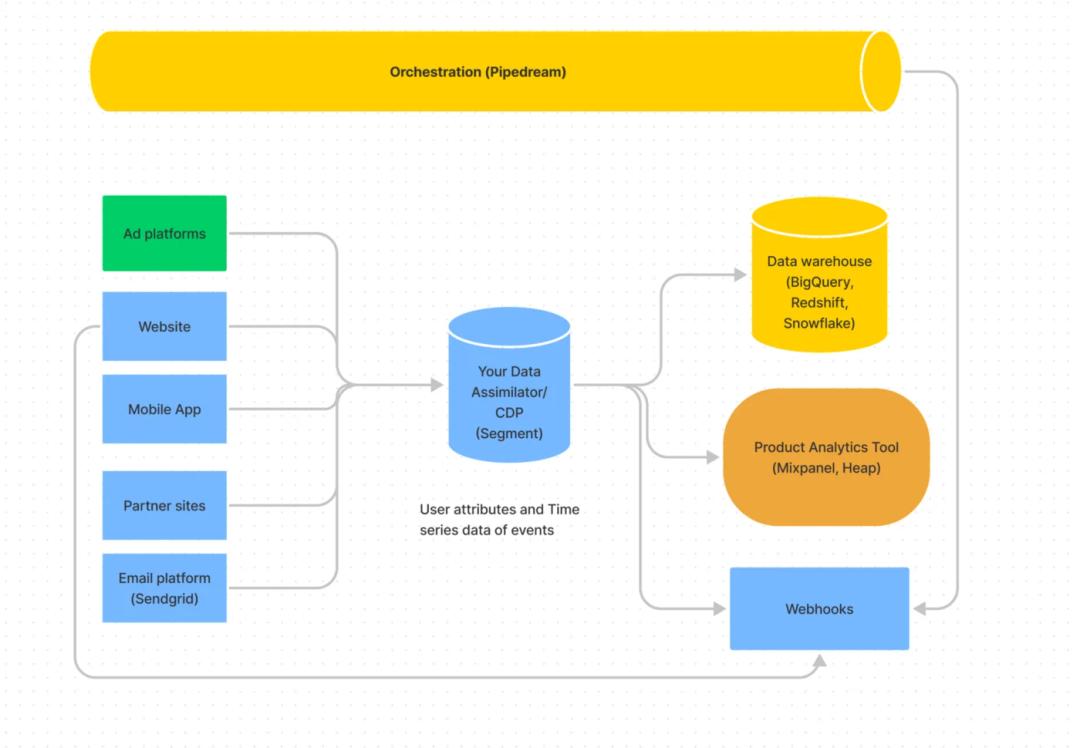

Data flow architecture with a Time series data assimilator

Orchestration

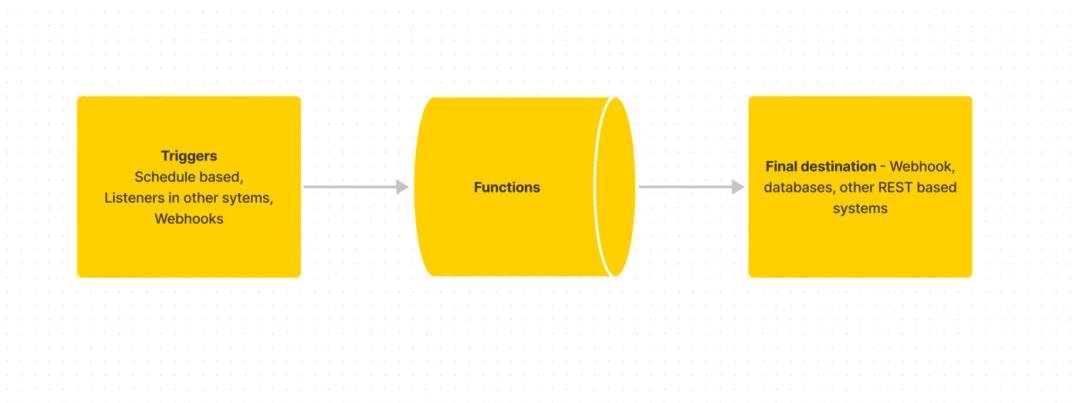

Finally, the last piece of the puzzle is to be able to perform automated, scalable actions based on some rules you can create on your own. Typically, you have a rules-based task engine in email and marketing automation tools like Marketo. However, those rules are specific to the data available only on those platforms. Ideally, you want one rules engine to act on data collected across multiple systems like your website, app, ad platforms, etc.

The three pillars of an orchestration engine

There are a few choices here. Each gives you three things: a way to listen to events, triggers that kick off an action, and the action itself, which is a simple, lambda-function-like entity.
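
To make the three pieces concrete, here is a minimal sketch of events, triggers, and actions in TypeScript. It is not Pipedream's API or any particular product's rule format: the `UserEvent` fields, the rule names, and the action strings are hypothetical, and each action simply returns a description of what it would do instead of calling a real system.

```typescript
// Hypothetical event, as it might arrive from the website, app, or ad platform.
interface UserEvent {
  source: "website" | "app" | "ads";
  name: string;
  userId: string;
  attributes: Record<string, string | number | boolean>;
}

// A rule pairs a trigger (should we act on this event?) with an action.
interface Rule {
  id: string;
  trigger: (event: UserEvent) => boolean;
  // In a real system the action would call an email, chat, or CRM API.
  // Here it returns a description so the flow is easy to follow.
  action: (event: UserEvent) => string;
}

// Run every rule whose trigger matches the event and collect the results.
function orchestrate(event: UserEvent, rules: Rule[]): string[] {
  return rules
    .filter((rule) => rule.trigger(event))
    .map((rule) => rule.action(event));
}

// Example rules that act on data from more than one system.
const rules: Rule[] = [
  {
    id: "nudge-active-trial-user",
    trigger: (e) =>
      e.source === "app" &&
      e.name === "project_created" &&
      e.attributes.plan === "trial",
    action: (e) => `send-upgrade-nudge:${e.userId}`,
  },
  {
    id: "offer-chat-on-pricing",
    trigger: (e) =>
      e.source === "website" &&
      e.name === "page_view" &&
      e.attributes.path === "/pricing",
    action: (e) => `open-chat:${e.userId}`,
  },
];
```

Keeping the rules as plain data means one engine can evaluate events from every source, which is the point of not relying on the rules built into a single marketing tool.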

We have been using a Node.js-based system called Pipedream. It allows us to listen to webhook events and run functions that use data changes or schedules as triggers. With the web, data, and orchestration now in place, the architecture looks like this.

The complete picture

Summary

In summary, a tech stack or OS for PLG architecture requires an extremely agile website, a good data strategy, and a flexible orchestration engine. Once you have these in place, you can view, nudge, and deliver personalizations in real time to drive growth for your product. Two things we did not cover in this blog are where your traditional CRM fits in this picture and how to create real-time dashboards and reporting based on this architecture. Stay tuned as we cover those in future blogs.
